Category Archives: Social Dynamics

Chaos as a system: New Framework

Posted by Hindol Datta

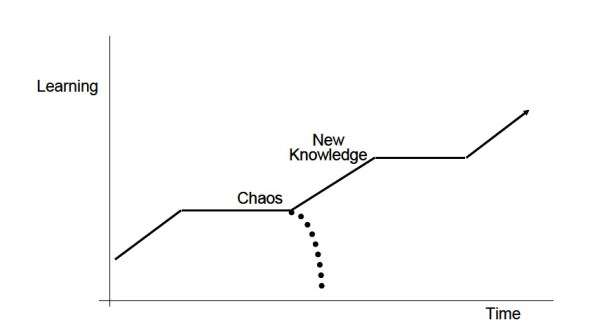

Chaos is not an unordered phenomenon. There is a certain homeostatic mechanism at play that forces a system with the inherent characteristics of a “chaotic” process to converge to some sort of stability with respect to predictability and parallelism. Our understanding of order, which is deemed to be the opposite of chaos, rests on a shared consensus that the system will behave in an expected manner. Hence, we often describe such systems as being “balanced” or “stable” or “in order”. However, it is also becoming common knowledge in the science of chaos that slight changes in the initial conditions of a system can produce variability in the final output that might not be predictable. So how does one straddle order and chaos in an observed system, and what implications does this have for the ongoing study of such systems?

Chaotic systems can be considered to have a highly complex order. It might require the tools of pure mathematics and extreme computational power to understand such systems. These tools have provided some insights into chaotic systems by visually representing outputs as recurrences of a distribution of outputs related to a given set of inputs. Another interesting tie-in to this model is the existence of entropy, the variable that taxes a system and diminishes its impact on expected outputs. Any system acts like a living organism: it requires oodles of resources to survive and a well-established set of rules to govern the internal mechanism that drives the vector of its movement. What emerges is that chaotic systems display some order while being subject to an inherent mechanism that softens their impact over time. Most approaches to studying complex and chaotic systems involve understanding graphical plots of a fractal nature and bifurcation diagrams. These models illustrate very complex recurrences of outputs directly related to inputs. Hence, complex order emerges from chaotic systems.
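The sensitivity to initial conditions noted earlier is often illustrated with the logistic map, the same model that sits behind many bifurcation diagrams. The sketch below is only an illustration and not part of the original argument: the function name `logistic_orbit`, the parameter value r = 4 and the starting point 0.2 are assumptions chosen for the example.

```python
def logistic_orbit(x0, r=4.0, steps=100):
    """Iterate x -> r * x * (1 - x) and return every value visited."""
    orbit = [x0]
    for _ in range(steps):
        x = orbit[-1]
        orbit.append(r * x * (1.0 - x))
    return orbit


# Two starting points that differ by one part in a billion (assumed values).
baseline = logistic_orbit(0.2)
nudged = logistic_orbit(0.2 + 1e-9)

# Distance between the two trajectories at each step.
gaps = [abs(a - b) for a, b in zip(baseline, nudged)]

for step in (0, 10, 20, 30, 40):
    print(f"step {step:2d}: gap = {gaps[step]:.3e}")
print(f"largest gap over the run: {max(gaps):.3f}")
```

The rule is fully deterministic and both runs stay inside the same bounded range, yet the tiny initial difference grows until the two trajectories bear no resemblance to each other. That is the sense in which a chaotic system can be ordered and unpredictable at once.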

A case in point would be the relation of a population parameter to its immediate environment. It is argued that a population in an environment will maintain a certain number, and that external forces will actively work to ensure that the population stays at that standard number. It is a very Malthusian analytic, but what is interesting is that there could be new and meaningful influences on the number that might increase the scale. In our current context, a change in technology or ingenuity could significantly alter the natural homeostatic number. The fact remains that forces are always at work on a system. Some systems are autonomic: they self-organize and correct themselves toward some stable convergence. Other systems are not autonomic, and one can only resort to the laws of probability to get some insight into the possible outputs, but never to the point of certainty in prediction.
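One common way to picture a population settling at a homeostatic number is discrete logistic growth toward a carrying capacity. The following is a minimal sketch under stated assumptions: the function `simulate_population`, the growth rate of 0.5 and the capacities of 1000 and 2000 are hypothetical values picked for illustration, not figures from any study.

```python
def simulate_population(start, capacity, growth_rate=0.5, steps=60):
    """Discrete logistic growth: the population is pulled toward `capacity`."""
    population = float(start)
    for _ in range(steps):
        population += growth_rate * population * (1.0 - population / capacity)
    return population


# Starting below or above the capacity, the population converges to it.
from_below = simulate_population(10, capacity=1000)
from_above = simulate_population(1500, capacity=1000)

# A technology shift is modelled here (as an assumption) by raising the capacity.
after_innovation = simulate_population(from_below, capacity=2000)

print(round(from_below), round(from_above), round(after_innovation))
```

In this toy model the corrective force is the `(1 - population / capacity)` term: it is positive below the capacity and negative above it, which is what makes the system self-correcting. Raising the capacity moves the point of convergence rather than removing it.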

Organizations have a lot of interacting variables at play at any given moment. In order to influence the organization’s behavior and/or direction, policies might be formulated to bring about the desired results. However, these nudges toward setting the organization off in the right direction might also lead to unexpected results. The aim is to foresee some of these unexpected results and mollify the adverse consequences while, in parallel, encouraging the system to maximize the benefits. So how does one effect such changes?

It all starts with building out an operating framework. There needs to be clarity around goals and what the ultimate purpose of the system is. Thus, there are a few objectives that bind the framework.

- Clarity around goals and the timing around achieving these goals. If there is no established time parameter, then the system might jump across various states over time and it would be difficult to establish an outcome.

- Evaluate all of the internal and external factors that might operate in the framework that would impact the success of organizational mandates and direction. Identify stasis or potential for stasis early since that mental model could stem the progress toward a desirable impact.

- Apply toll gates strategically to evaluate whether the system is proceeding along the lines of expectation, so that any early aberrations are evaluated and the rules are tweaked to get the system to track on a desirable trajectory.

- Develop islands of learning across the path and engage the right talent and other parameters to force adaptive learning and therefore a more autonomic direction to the system.

- Bind the agents and actors in the organization to a shared sense of purpose within the parameter of time.

- Introduce diversity into the framework early in the process. The engagement of diversity allows the system to modulate around a harmonic mean.

- Maintain a well-documented knowledge base such that the accretive learning that results from changes in the organization becomes a springboard for new initiatives that reduce the costs of potential failures or latency in execution.

- Finally, encourage the leadership to ensure that the vector is pointed in the right direction at any given time.

Once a framework and the engagement rules are drawn out, it is necessary to rely on the natural velocity and self-organization of purposeful agents to move the agenda forward, hopefully with little or no intervention. A mechanism of feedback loops along the way would guide the efficacy of the direction of the system. The implication is that strategy and operations must be aligned and reevaluated, and positive behavior encouraged, to ensure that the system meets its objective.

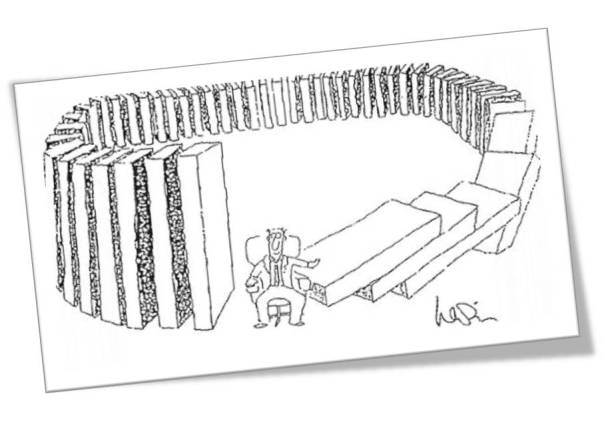

However, as noted above, entropy is a dynamic that often threatens to derail the system objective. There will be external or internal forces constantly at work to undermine system velocity. The operating framework needs to anticipate that real possibility and pre-empt it with rules or the introduction of specific capital to neutralize these occurrences. Stasis is an active agent that can work against the system dynamic. Stasis is the inclination of agents or behaviors to anchor the system to some status quo. We have to be mindful that change might not be embraced, and if there are resisters to that change, the dynamic of organizational change will invariably be impacted: it will take a lot more to get something done than would otherwise be needed. Identifying stasis and agents of stasis is a foundational element.

While the above is one example of how to manage organizations in light of how chaotic systems behave, another example would be the formulation of the organization’s strategy in response to external forces. How do we apply our learnings from chaos to deal with the challenges of competitive markets by aligning the internal organization to external factors? One of the key insights that chaos surfaces is that it is nigh impossible to fully anticipate all of the external variables, and that leaving the system to adapt organically to external dynamics would allow the organization to thrive. To thrive in this environment, the organization must be able to change rapidly outside of the traditional hierarchical expectations: when organizations are unable to make those rapid changes or make strategic bets in response to the external systems, the execution value of the organization diminishes.

Margaret Wheatley, in her book Leadership and the New Science: Discovering Order in a Chaotic World (revised edition), says, “Organizations lack this kind of faith, faith that they can accomplish their purposes in various ways and that they do best when they focus on direction and vision, letting transient forms emerge and disappear. We seem fixated on structures…and organizations, or we who create them, survive only because we build crafty and smart—smart enough to defend ourselves from the natural forces of destruction.” Karl Weick, an organizational theorist, believes that business strategies should be “just in time…supported by more investment in general knowledge, a large skill repertoire, the ability to do a quick study, trust in intuitions, and sophistication in cutting losses.”

We can expand the notion of chaos in a system to embrace the bigger challenges associated with the environment, globalization, and the advent of disruptive technologies.

One of the key challenges of globalization is how policy makers balance it against potential social disintegration. As policies emerge to acknowledge the benefits and the necessity of integrating with a new and dynamic global order, the corresponding impact on local institutions can vary and might even be deleterious to those institutions. Policies have to encourage flexibility in local institutional capability, and that might mean increased investments in infrastructure, creating a diverse knowledge base, establishing rules that govern free but fair trading practices, and encouraging the mobility of capital across borders. The grand challenges of globalization weigh upon government and private entities that scurry to maintain a continual balance so that local systems survive and flourish within the context of the larger framework. The boundaries of the system are larger and incorporate many more agents, which leads to the real possibility of systems that are difficult to control via a hierarchical or centralized body politic. Decision making is thus pushed out to the agents and actors, but these work under a larger set of rules. Rigidity in rules and governance can amplify failures in this process.

Related to the realities of globalization is the growth of exponential technologies. Technologies with extreme computational power are integrating and creating robust communication networks within and outside of the system: the system herein could represent nation-states, companies or industrialization initiatives. Will the exponential technologies diffuse across larger scales quickly, and will the corresponding increase in adoption of new technologies change the future of the human condition? There are fears that new technologies would displace large groups of economic participants who are not immediately equipped to incorporate and feed those technologies into the future: that might be on account of disparity in education and wealth, institutional policies, and the availability of opportunities. Since technologies are exponential, we get a performance curve that is difficult for us to understand. In general, we tend to think linearly, and this frailty in our thinking removes us from the path to the future sooner rather than later. What makes this difficult is that the exponential impact is occurring across various sciences and no one body can effectively fathom the impact and the direction. Bill Gates says it well: “We always overestimate the change that will occur in the next two years and underestimate the change that will occur in the next ten. Don’t let yourself be lulled into inaction.” Do chaos theory and complexity science arm us with a different tool set than the traditional one of strategy roadmaps and product maps? If society is being carried by the intractable power of the exponent in technological advances, then a linear map might not provide the right framework to develop strategies for long-term success. Rather, a more collaborative and transparent roadmap, one that encourages the integration of thoughts and models among actors who are adapting and adjusting dynamically by the sheer force of will, would perhaps be an alternative and practical approach in the new era.
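The gap between linear intuition and exponential reality is easy to see with a little arithmetic. The sketch below is purely illustrative: the helper names, the starting value of 1, the linear increment of 1 per period and the doubling factor of 2 are all assumptions, not measurements of any real technology.

```python
def linear_projection(start, increment, periods):
    """Value after each period when a fixed amount is added every period."""
    return [start + increment * t for t in range(periods + 1)]


def exponential_projection(start, factor, periods):
    """Value after each period when the value is multiplied every period."""
    return [start * factor ** t for t in range(periods + 1)]


linear = linear_projection(1.0, 1.0, 10)
exponential = exponential_projection(1.0, 2.0, 10)

for period in (1, 2, 5, 10):
    print(f"period {period:2d}: linear = {linear[period]:6.0f}, "
          f"exponential = {exponential[period]:6.0f}")
```

After one period the two projections agree, which is why a linear map looks adequate in the short run. By the tenth period the doubling curve is roughly a hundred times larger, and a plan drawn against the linear line is no longer describing the same world.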

Lately there has been a lot of discussion around climate change. It has been argued, with good reason and empirical evidence, that the environment can be adversely impacted by mass industrialization, population growth, resource availability issues, the inability of the market system to incorporate the cost of spillover effects, the adverse impact of moral hazard and the tragedy of the commons, etc. While there are demurrers who contest the long-term climate change issues, the train seems to have already left the station! The facts clearly indicate that the climate will be impacted. Skeptics might argue that science has not yet developed a precise predictive model of the weather system two weeks out, and that it is foolhardy to conclude a dystopian future for the climate fifty years out. However, the counter-argument is that our inability to explain the near-term effects of weather changes and turbulence does not negate the existence of climate change due to the accretion of greenhouse gases. Boiling a pot of water will not necessarily give us an understanding of all of the convection currents among the water molecules, but that does not change the fact that the water will heat up.

Posted in Business Process, Chaos, Complexity, emergent systems, exponential, growth, Innovation, Leadership, Learning Organization, Learning Process, Model Thinking, Narratives, Order, Organization Architecture, scale, Social Dynamics, Social Systems

Tags: chaos, Complexity, environment, innovation, learning organization, order, platform, systems, transparency

The Law of Unintended Consequences

Posted by Hindol Datta

The Law of Unintended Consequences holds that the actions of a central body that might claim omniscient, omnipotent and omnivalent intelligence might, in fact, lead to consequences that are neither anticipated nor intended.

The concept of the Invisible Hand, as introduced by Adam Smith, argues that it is the self-interest of all the market agents that ultimately creates a system that maximizes the good for the greatest number of people.

Robert Merton, a sociologist, studied the law of unintended consequence. In an influential article titled “The Unanticipated Consequences of Purposive Social Action,” Merton identified five sources of unanticipated consequences.

Ignorance makes it difficult, if not impossible, to anticipate the behavior of every element of the system, which leads to incomplete analysis.

Errors might occur when someone uses historical data and projects the context of history into the future. Linear thinking is a great example of an error that we are wrestling with right now: looking back, we understand that there are systems that emerge exponentially, but it is hard to decipher the outcome unless one were to take a leap of faith.

Biases work their way into the study as well. We study a system under the weight of our biases, intentional or unintentional. It is hard to strip them away even when there are different bodies of thought about a particular system and how a certain action would impact it.

Woven in with the element of bias is the element of basic values that may require or prohibit certain actions even if the long-term impact is unfavorable. A good example would be the toll gates established by the FDA to allow drugs to be commercialized. In its aim to provide a safe drug, the policy might make the release of drugs for experiments and commercial purposes so slow that many patients who might otherwise benefit from the drug lose out.

Finally, he discusses the self-fulfilling prophecy which suggests that tinkering with the elements of a system to avert a catastrophic negative event might in actuality result in the event.

It is important, however, to acknowledge that unintended consequences do not necessarily lead to a negative outcome. In fact, there could be unanticipated benefits. A good example is Viagra, which started off as a pill to lower blood pressure before its potency in treating erectile dysfunction was discovered. Another is the discovery that sunken ships became the habitat for very rich coral reefs in shallow waters, which led scientists to make new discoveries about the emergence of flora and fauna in these habitats.

If initiatives that are considered “positive” are exercised to influence the system in a manner that contributes to the greatest good, it is often the case that these initiatives prove to be catastrophic in the long term. Merton attributes this unanticipated consequence to the “relevance paradox”, where decision makers think they know their areas of ignorance regarding an issue and obtain the necessary information to fill that gap, but intentionally or unintentionally neglect other areas because their relevance to the final outcome is not clear or not aligned with their values. He goes on to argue, in a nutshell, that unintended consequences relate to our hubris: we are hardwired to put our short-term interest over our long-term interest, and thus we tinker with the system to surface an effect which later blows back in unexpected forms. Albert Camus has said that “The evil in the world almost always comes of ignorance, and good intentions may do as much harm as malevolence if they lack understanding.”

An interesting emergent property related to the law of unintended consequences is the concept of Moral Hazard: individuals have incentives to alter their behavior when the cost of their risk-taking or bad decision making is borne by, or diffused among, others. For example:

If you have an insurance policy, you will take more risks than otherwise. The cost of those risks will impact the total economics of the insurance and might lead to costs being distributed from the high-risk takers to the low risk takers.
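The way costs shift from high-risk takers to low-risk takers can be shown with a small pooling calculation. This is a hedged sketch with made-up numbers: the function `pooled_premium`, the pool of eight careful and two risky members, and the expected losses of 100 and 500 are hypothetical, and real insurance pricing is considerably more involved.

```python
def pooled_premium(expected_losses):
    """Break-even premium when every member of the pool pays the same amount."""
    return sum(expected_losses) / len(expected_losses)


# Hypothetical expected annual loss per member.
careful_loss = 100.0
risky_loss = 500.0
pool = [careful_loss] * 8 + [risky_loss] * 2

premium = pooled_premium(pool)

# What each type of member pays relative to the loss they actually cause.
careful_overpays = premium - careful_loss
risky_underpays = risky_loss - premium

print(f"premium = {premium:.0f}, careful members overpay by {careful_overpays:.0f}, "
      f"risky members underpay by {risky_underpays:.0f}")
```

Because the insurer in this sketch cannot tell the two groups apart, everyone pays the same premium, and the careful members end up subsidizing the risk taken by the others.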

How do the conditions of moral hazard arise in the first place? There are two important conditions that must hold. First, one party has more information than another party. This information asymmetry creates the condition for moral hazard. For example, around 2006 sub-prime mortgage lenders extended loans to individuals who had doubtful income and means to pay. The banks that were buying these mortgages were not aware of it. Thus, they ended up holding a lot of toxic loans due to information asymmetry. Second is the existence of an understanding that might affect the behavior of two agents. If a child knows that they are going to get bailed out by their parents, they might take some risks that they otherwise might not have taken.

To counter the possibility of unintended consequences, it is important to raise our thinking to second-order thinking. Most of our thinking is simplistic, based on opinions and not too well grounded in facts. A lot of biases enter first-order thinking, and in fact all of the elements that Merton touches on enter it: namely, ignorance, biases, errors, personal value systems and teleological thinking. Hence, it is important to get into second-order thinking, where the reasoning process is surfaced by looking at interactions of elements, temporal impacts and other system dynamics. We had mentioned earlier that it is still difficult to fully wrestle with all the elements of emergent systems even through the best of second-order thinking, simply because the dynamics of a complex adaptive system or complex physical system would deny us that crown of competence. However, this fact suggests that we step away from simple, easy and defensible heuristics to measure and gauge complex systems.

Posted in Business Process, emergent systems, Learning Organization, Learning Process, Management Models, Order, Social Dynamics, Unitended Consequence

Tags: CAS, Complexity, experiments, innovation, intuition, open source, slippery slope, Systems design, Systems Thinking, uncertainty, unintended consequence

Complex Physical and Adaptive Systems

Posted by Hindol Datta

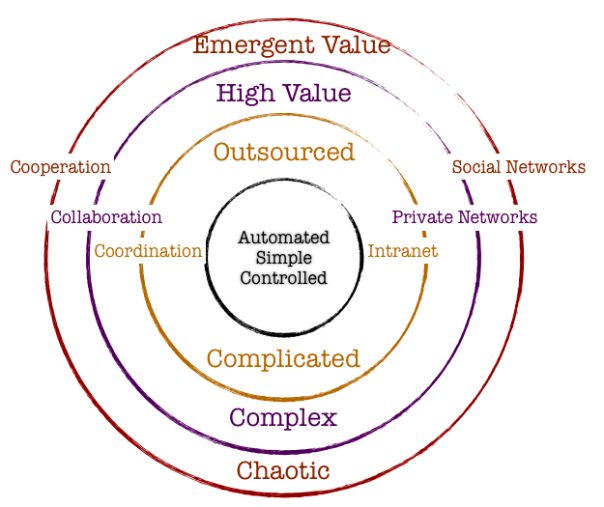

There are two models in complexity: Complex Physical Systems and Complex Adaptive Systems. For us to grasp the patterns that are evolving, much of it seemingly out of our control, it is important to understand both of these models. One could argue that these models are mutually exclusive. While the existing body of literature might be inclined toward supporting that argument, we also find some degree of overlap that makes our understanding of complexity unstable. And instability is not to be construed as a bad thing! We might operate in a deterministic framework, and often we might operate in the realm of a graded understanding of the volatility associated with outcomes. Keeping this in mind would be helpful as we dive deep into the two models. What we hope is that our understanding of these models will raise questions and establish mental frameworks for the intentional choices that we are led to make by the system, or that we make to influence the evolution of the system.

Complex Physical Systems (CPS)

Complex Physical Systems are bounded by certain laws. Given the initial conditions or elements in the system, there is a degree of predictability and determinism associated with the behavior of the elements governed by the overarching laws of the system. Despite the tautological nature of the term (Complex Physical System), which suggests a physical boundary, the late 1900s surfaced some nuances to this model: if there is a slight and arbitrary variation in the initial conditions, the outcome could be significantly different from expectations. The assumption of determinism is put to the sword. The notion that behaviors will follow established trajectories if rules are established and laws are defined has been put to the test. These discoveries by introspection offer an insight into the developmental blocks of complex physical systems. A better understanding of them will enable us to recognize such systems when we see them, and thereafter to establish toll-gates and actions that narrow, to the extent possible, the region of uncertainty around outcomes.

The universe is designed as a complex physical system. Just imagine! Let this sink in a bit. A complex physical system might be regarded as relatively simpler than a complex adaptive system. And with that in mind, once again … the universe is a complex physical system. We are awed by the vastness and scale of the universe, we regard the skies with reverence, and we wonder and ruminate on what lies beyond the frontiers of the universe, if anything. Really, there is nothing bigger than the universe in the physical realm, and yet we regard it as a simple system: a “simple” Complex Physical System. In fact, the behavior of ants that leads to the sustainability of an ant colony is significantly more complex, and we mean by orders of magnitude.

Complex behavior in nature reflects the tendency of large systems with many components to evolve into a poised “critical” state, where minor disturbances or arbitrary changes in initial conditions can have a seemingly catastrophic impact such that the overall system changes significantly. And that happens not by some invisible hand or some uber design. What is fundamental to understanding complex systems is that complexity is defined as the variability of the system. Depending on our lens, the scale of variability could change, and that might call for a different apparatus to understand the system. Thus, determinism is not the measure: Stephen Jay Gould has argued that it is virtually impossible to predict the future. We have hindsight explanatory powers but not predictive powers. Hence, a system that starts from an initial state might, over time, arrive at an outcome that is distinguishable in form and content from the original state. We see complex physical systems all around us: snowflakes, patterns on coastlines, waves crashing on a beach, rain, etc.

Complex Adaptive Systems (CAS)

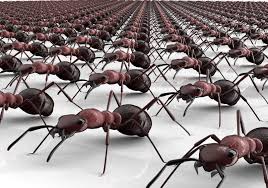

Complex adaptive systems, in contrast, are learning systems that evolve. They are composed of elements called agents that interact with one another and adapt in response to those interactions.

Markets are a good example of complex adaptive systems at work.

CAS agents have three levels of activity. As described by Johnson in Complexity Theory: A Short Introduction – the three levels of activity are:

- Performance (moment by moment capabilities): This establishes the locus of all behavioral elements that characterize the agent at a given point in time, and thereafter establishes triggers or responses. For example, if an object is approaching and the response of the agent is to run, that would constitute a performance if-then outcome. Alternatively, it could be signal-driven: an ant emits a certain scent when it finds food, and other ants will catch on to that trail and act, en masse, to follow it. Thus, an agent or an actor in an adaptive system has detectors that allow it to capture signals from the environment for internal processing, and it also has effectors that translate the processing into higher-order signals that influence other agents to behave in certain ways in the environment. The signal is the scent that creates these interactions and thus the rubric of a complex adaptive system.

- Credit assignment (rating the usefulness of available capabilities): As the agent gathers experience over time, it will start to rely heavily on certain rules or heuristics that it has found useful. Typically these may not be the best rules; they may simply be the rules that were discovered first, and thus they stay. Agents rank these rules in some ordinal order to determine which rule is best to fall back on in a given situation. This is the crux of decision making. However, there are also times when it is difficult to assign a rank to a rule, especially if an action lays the groundwork for a future course of other actions. A spider weaving a web might be regarded as an example of an agent expending energy in the hope that she will get some food. This is a stage-setting assignment that agents have to undergo as well. One of the common models used to describe this is the bucket-brigade algorithm, which essentially states that the strength of a rule depends on the success of the overall system and the agents that constitute it. In other words, each agent in the chain needs to be aware only of the strengths of the agents immediately before and after it, and that is done by some sort of number assignment that becomes stronger from the beginning of the system to the end of the system. If there is a final valuable end-product, then the pathway of the rules reflects success. Once again, it is conceivable that this might not be the optimal pathway but a satisficing pathway that results in a better system.

- Rule discovery (generating new capabilities): Performance and credit assignment in agent behavior suggest that the agents are governed by a certain bias. If the agents have been successful following certain rules, they would be inclined toward following those rules all the time. As noted, rules might not be optimal but satisficing. Is improvement a matter of just incremental changes to the process? We do see major leaps in improvement … so how and why does this happen? Someone in the process has decided to follow a different rule despite their experience. It could have been an accident or very intentional.
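The bucket-brigade idea mentioned under credit assignment, usually credited to John Holland's work on classifier systems, can be sketched in a few lines. What follows is a deliberately stripped-down illustration and not the full algorithm: there is no bidding competition between rival rules, the chain of three rules is fixed, and the function name, the bid fraction of 0.1 and the reward of 10 are assumptions made for the example.

```python
def bucket_brigade_episode(strengths, reward, bid_fraction=0.1):
    """One pass along a chain of rules that fire in order.

    Each rule pays a bid (a fraction of its strength) to the rule that fired
    just before it; only the last rule is paid by the environment.
    """
    updated = list(strengths)
    for i in range(len(updated)):
        bid = bid_fraction * updated[i]
        updated[i] -= bid
        if i > 0:
            updated[i - 1] += bid  # pay the predecessor that set the stage
    updated[-1] += reward  # external payoff for the final valuable outcome
    return updated


strengths = [10.0, 10.0, 10.0]  # hypothetical starting strengths

after_one = bucket_brigade_episode(strengths, reward=10.0)

trained = strengths
for _ in range(500):
    trained = bucket_brigade_episode(trained, reward=10.0)

print("after one episode:", [round(s, 2) for s in after_one])
print("after 500 episodes:", [round(s, 2) for s in trained])
```

After a single episode only the last rule has gained strength, because it alone touched the reward. Over many episodes the payments passed backward lift the earlier, stage-setting rules as well, even though each rule only ever deals with its immediate neighbours in the chain.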

One of the theories that has been presented is that of building blocks. CAS innovation is a result of reconfiguring the various components in new ways. One quips that if energy is neither created nor destroyed … then everything that exists today or will exist tomorrow is nothing but a reconfiguration of energy in new ways. All of tomorrow resides in today … just patiently waiting to be discovered. Agents create hypotheses and experiment in the petri dish by reconfiguring their experiences and other agents’ experiences to formulate hypotheses and the runway for discovery. In other words, there is a collaboration element that comes into play, where the interaction of the various agents and their assignment as a group to a rule also sets the stepping stone for potential leaps in innovation.

Another key characteristic of CAS is that the elements are constituted in a hierarchical order. Combinations of agents at a lower level result in a set of agents higher up, and so on and so forth. Thus, agents in higher hierarchical orders take on some of the properties of the lower orders, but they also include the interaction rules that distinguish the higher order from the lower order.

Posted in Business Process, Chaos, Complexity, Innovation, Learning Organization, Learning Process, Model Thinking, Order, Organization Architecture, Social Dynamics

Tags: adaptive, CAS, Complexity, CPS, creativity, economics, innovation, open source, platform, Systems Thinking, transparency, uncertainty

Short History of Complexity

Posted by Hindol Datta

Complexity theory began in the 1930s when natural scientists and mathematicians rallied together to get a deeper understanding of how systems emerge and play out over time. However, the groundwork of complexity theory was laid in the 1850s with Darwin’s introduction of Natural Selection. It was further extended by Mendel’s laws of inheritance. Darwin’s Theory of Evolution has been posited as a slow, gradual process. He says that “Natural selection acts only by taking advantage of slight successive variations; she can never take a great and sudden leap, but must advance by short and sure, though slow steps.” Thus, he concluded that complex systems evolve not by leaps but by small steps, and the result is an organic formulation of an irreducibly complex system which is composed of many parts, all of which work together closely for the overall system to function. If any part is missing or does not act as expected, then the system becomes unwieldy and breaks down. So it was an early foray into distinguishing the emergent property of a system from the elements that constitute it. Mendel, on the other hand, laid out the property of inheritance across generations. An organic system inherits certain traits that are reconfigured over time and adapt to the environment, thus leading to the development of an organism which, for our purposes, falls in the realm of a complex outcome. One would imagine that there is a common thread between Darwin’s Natural Selection and Mendel’s laws of genetic inheritance. But that is not the case, and that has wide implications in complexity theory. Mendel focused on how the traits are carried across time: the mechanics, which are largely determined by some probabilistic functions. The underlying theory of Mendel hinted at the possibility that a complex system is a result of discrete traits that are passed on, while Darwin suggests that complexity arises due to continuous random variations.

In the 1920s, literature suggested that a complex system has elements of both: continuous adaptation and discrete inheritance that is hierarchical in nature. A group of biologists reconciled the theories into what is commonly known as the Modern Synthesis. The principles guiding the Modern Synthesis were that natural selection is the major mechanism of evolutionary change, and that small random variations of genes together with natural selection result in the origin of new species. Furthermore, the new species might have properties different from those of the elements that constitute it. The Modern Synthesis thus provided the framework around complexity theory. What does this great debate mean for our purposes? Once we determine that a system is complex, how does the debate shed more light on our understanding of complexity? Does it shed light on how we regard complexity and how we subsequently deal with it? We need to extend our thinking by looking at a few developments of the 20th century that would give us a better perspective. Let us then continue our journey into the evolution of the thinking around complexity.

Axioms are statements that are self-evident. An axiom serves as a premise or starting point for further reasoning and arguments. An axiom thus is not contestable, because if it were, then all the reasoning that is built upon it would fall apart. Thus, for our purposes and our understanding of complexity theory – A complex system has an initial state that is irreducible physically or mathematically.

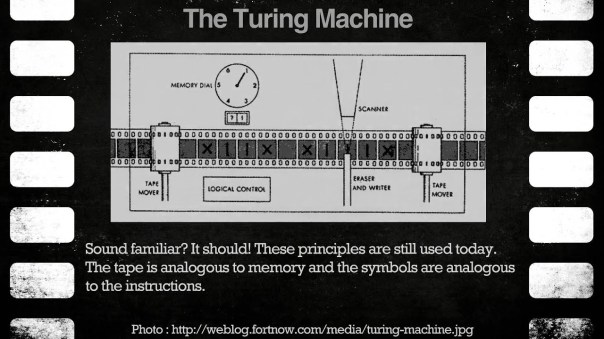

One of the key elements in Complexity is computation or computability. In the 1930’s, Turing introduced the abstract concept of the Turing machine. There is a lot of literature that goes into the specifics of how the machine works but that is beyond the scope of this book. However, there are key elements that can be gleaned from that concept to better understand complex systems. A complex system that evolves is a result of a finite number of steps that would solve a specific challenge. Although the concept has been applied in the boundaries of computational science, I am taking the liberty to apply this to emerging complex systems. Complexity classes help scientists categorize problems based on how much time and space is required to solve them and verify solutions. The complexity is thus a function of time and memory. This is a very important concept and we have radically simplified it to attend to a self-serving purpose: to understand complexity and how to solve the grand challenges. Time complexity refers to the number of steps required to solve a problem. A complex system might not necessarily be the most efficient outcome but is nonetheless an outcome of a series of steps, backward and forward, that result in a final state. There are pathways or efficient algorithms that are produced, and the mechanical states to produce them are defined and known. Space complexity refers to how much memory the algorithm depends on to solve the problem. Let us keep these concepts in mind as we round this all up into a more comprehensive work that we will relay at the end of this chapter.

Around the 1940’s, John von Neumann introduced the concept of self-replicating machines. Like Turing, von Neumann designed an abstract machine which, when run, would replicate itself. The machine consists of three parts: a ‘blueprint’ for itself, a mechanism that can read any blueprint and construct the machine (sans blueprint) specified by that blueprint, and a ‘copy machine’ that can make copies of any blueprint. After the mechanism has been used to construct the machine specified by the blueprint, the copy machine is used to create a copy of that blueprint, and this copy is placed into the new machine, resulting in a working replication of the original machine. Some machines will do this backwards, copying the blueprint and then building a machine. The implications are significant. Can complex systems regenerate? Can they copy themselves and exhibit the same behavior and attributes? Are emergent properties equivalent? Does history repeat itself or does it rhyme? How does this thinking move our understanding and operating template forward once we identify complex systems?

Let us step forward into the late 1960’s when John Conway started doing experiments extending the concept of cellular automata. He introduced the Game of Life in 1970 as a result of his experiments. His main thesis was simple: the game is a zero-player game, meaning that its evolution is determined by its initial state, requiring no further input. One interacts with the Game of Life by creating an initial configuration and observing how it evolves, or, for advanced players, by creating patterns with particular properties. The entire formulation was done on a two-dimensional universe in which patterns evolved over time. It is one of the finest examples in science of how a few simple, non-arbitrary rules can result in incredibly complex behavior that is fluid and provides a pleasing pattern over time. In other words, if you were an outsider looking in, you would see a pattern emerging from simple initial states and simple rules. We encourage you to look at several patterns that many people have constructed using different Game of Life parameters. The main elements are as follows. A square grid contains cells that are alive or dead. The behavior of each cell is dependent on the state of its eight immediate neighbors. Eight is an arbitrary number that Conway established to keep the model simple. These cells will strictly follow the rules.

Live Cells:

- A live cell with zero or one live neighbors will die

- A live cell with two or three live neighbors will remain alive

- A live cell with four or more live neighbors will die.

Dead Cells:

- A dead cell with exactly three live neighbors becomes alive

- In all other cases a dead cell will stay dead.
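The live-cell and dead-cell rules above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `step`, the representation of the grid as a set of `(row, col)` coordinates, and the unbounded grid are choices made here for brevity and are not part of Conway's description.

```python
from collections import Counter


def step(live):
    """Advance the Game of Life by one generation.

    `live` is a set of (row, col) pairs for the live cells.
    The grid is treated as unbounded (an assumption of this sketch).
    """
    # Count, for every cell, how many of its eight neighbors are alive.
    neighbor_counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # A cell is alive in the next generation if it has exactly three live
    # neighbors, or if it is currently alive and has exactly two.
    return {
        cell
        for cell, n in neighbor_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


# A "blinker": three cells in a row flip between horizontal and vertical.
blinker = {(1, 0), (1, 1), (1, 2)}
print(sorted(step(blinker)))  # [(0, 1), (1, 1), (2, 1)]
```

Applying `step` twice to the blinker returns the original pattern, which is a small instance of the ordered behavior that emerges from the rules without any further input.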

Thus, what his simulation led to is the determination that life is an example of emergence and self-organization. Complex patterns can emerge from the implementation of very simple rules. The game of life thus encourages the notion that “design” and “organization” can spontaneously emerge in the absence of a designer.

Stephen Wolfram introduced the concept of Class 4 cellular automata, of which Rule 110 is well known and widely studied. The Class 4 automata validate a lot of the thinking grounding complexity theory. He shows that certain patterns emerge from initial conditions that are neither completely random nor completely regular: they seem to hint at an order, and yet the order is not predictable. One would expect that applying a simple rule repetitively to the simplest possible starting point would produce a system that is orderly and predictable, but that is far from the truth. The results exhibit some randomness and yet produce patterns with order and some intelligence.
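As a rough illustration of how little machinery Rule 110 needs, here is a minimal Python sketch. The function name `rule110_step`, the fixed-width row, and the choice to treat cells beyond the edges as dead are assumptions made for this example; the rule number itself encodes the outcome for each of the eight possible three-cell neighborhoods.

```python
RULE = 110  # binary 01101110: one output bit per (left, center, right) pattern


def rule110_step(cells):
    """Return the next row of a one-dimensional automaton under Rule 110.

    `cells` is a list of 0/1 values. Cells outside the row are treated
    as 0 (an assumption of this sketch).
    """
    n = len(cells)
    nxt = []
    for i in range(n):
        left = cells[i - 1] if i > 0 else 0
        center = cells[i]
        right = cells[i + 1] if i < n - 1 else 0
        # Read the neighborhood as a 3-bit number and look up that bit of RULE.
        index = (left << 2) | (center << 1) | right
        nxt.append((RULE >> index) & 1)
    return nxt


# Start from the simplest possible state: a single live cell at the right edge.
row = [0] * 31 + [1]
for _ in range(16):
    print("".join("#" if c else "." for c in row))
    row = rule110_step(row)
```

Running it prints a triangle of cells growing to the left whose interior is neither uniform nor simply periodic, which is the kind of behavior the paragraph above describes.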

Thus, his main conclusion from his discovery is that complexity does not have to beget complexity: simple forms following repetitive and deterministic rules can result in systems that exhibit complexity that is unexpected and unpredictable. However, he sidesteps the discussion around the level of complexity that his Class 4 automata generate. Does this determine or shed light on evolution, how human beings are formed, how cities evolve organically, how climate is impacted and how the universe undergoes change? One would argue that is not the case. However, if you take into account Darwin’s natural selection process, Mendel’s laws of genetic inheritance and their corresponding propagation, the definitive steps prescribed by the Turing machine that capture time and memory, von Neumann’s theory of machines able to replicate themselves without any guidance, and Conway’s tour de force in showing that initial conditions without any further input can create intelligent systems – you essentially can start connecting the dots to arrive at a core conclusion: higher order systems can organically create themselves from initial starting conditions. They exhibit a collective intelligence which is outside the boundaries of precise prediction. In the previous chapter we discussed complexity and we introduced an element of subjective assessment to how we regard what is complex and the degree of complexity. Whether complexity falls in the realm of a first-person subjective phenomenon or a scientific third-person objective phenomenon has yet to be ascertained. Yet it is indisputable that the product of a complex system might be considered a live pattern of rules acting upon agents to cause some deterministic yet seemingly random variation.

Posted in Chaos, Complexity, Innovation, Learning Process, Model Thinking, Order, Organization Architecture, Social Dynamics


Comparative Literature and Business Insights

Posted by Hindol Datta

“Literature is the art of discovering something extraordinary about ordinary people, and saying with ordinary words something extraordinary.” – Boris Pasternak

“It is literature which for me opened the mysterious and decisive doors of imagination and understanding. To see the way others see. To think the way others think. And above all, to feel.” – Salman Rushdie

There is a common theme that cuts across literature and business. It is called imagination!

Great literature seeds the mind to imagine faraway places across times and unique cultures. When we read a novel, we are exposed to complex characters that are richly defined, and the readers’ subjective assessment of the character and the context defines their understanding of how the characters navigate their relationships and their environment. Great literature offers many pauses for thought, and long after the book is read through … the theme gently seeps in like silt into the readers’ cumulative experiences. It is in literature that the concrete outlook of humanity receives its expression. Comparative literature, which is literature assimilated across many different countries, enables a diversity of themes that intertwine into the readers’ experiences, augmented by the reality of what they immediately experience – home, work, etc. It allows one not only to be capable of empathy but also … to craft out the fluid dynamics of ever changing concepts by dipping into many different types of case studies of human interaction. The novel and poetry are the bulwarks of literature. It is as important to study a novel as it is to enjoy great poetry. The novel lays out one or more plots and a rich tapestry of actions of the characters that navigate through these environments; poetry is the celebration of the ordinary turned into extraordinary enactments of the rhythm of the language that transport the readers, through images and metaphor, into single moments. It breaks the linear process of thinking, a perpendicular to a novel.

Business insights are generally a result of acute observation of trends in the market, internal processes, and general experience. Some business schools practice the case study method, which allows the student to have a fairly robust set of data points to fall back upon. Some of these case studies are fairly narrow but there are some that get one to think about personal dynamics. It is a fact that personal dynamics, biases and positioning play a very important role in how one advocates, views, or acts upon a position. Now the schools are layering in classes on ethics to convey that there are some fundamental protocols of human nature that one has to follow: the famous adage – All is fair in love and war – has lost and continues to lose its edge over time. Globalization, environmental consciousness, individual rights, the idea of democracy, the rights of fair representation, community service and business philanthropy are playing a bigger role in today’s society. Thus, business insights today are a result of reflection across multiple levels of experience that encompass not just the company or the industry … but a broader array of elements that exercise influence on the company’s direction. In addition, one always seeks an end in mind … one perpetually embraces a vision that is shaped by one’s judgments, observations and thoughts. Poetry adds the final wing for the flight into this metaphoric realm of interconnections – for that is always what a vision is – a semblance of harmony that inspires and resurrects people to action.

I contend that comparative literature is a leading indicator that allows a person to get a feel for the general direction of the express and latent needs of people. Furthermore, comparative literature does not offer a solution. Great literature does not portend a particular end. It leaves open a multitude of possibilities and what-ifs. Readers can literally transport themselves into the environment and wonder how they would act … the jump into a vicarious existence steeps the reader in a reflection that sharpens the intellect. This allows the reader in a business to be better positioned to excavate and address the needs of current and potential customers across boundaries.

“Literature gives students a much more realistic view of what’s involved in leading” than many business books on leadership, said the professor. “Literature lets you see leaders and others from the inside. You share the sense of what they’re thinking and feeling. In real life, you’re usually at some distance and things are prepared, polished. With literature, you can see the whole messy collection of things that happen inside our heads.” – Joseph L. Badaracco, the John Shad Professor of Business Ethics at Harvard Business School (HBS)

Aaron Swartz took down a piece of the Berlin Wall! We have to take it all down!

Posted by Hindol Datta

“The world’s entire scientific … heritage … is increasingly being digitized and locked up by a handful of private corporations… The Open Access Movement has fought valiantly to ensure that scientists do not sign their copyrights away but instead ensure their work is published on the Internet, under terms that allow anyone to access it.” – Aaron Swartz

Information, in the context of scholarly articles by researchers at universities and think-tanks, is not a zero sum game. In other words, one person can have more without someone else having less. When you start creating “Berlin” walls in the information arena within the halls of learning, then learning itself is compromised. In fact, contributing or granting the intellectual estate into the creative commons serves a higher purpose in society – access to information and hence, a feedback mechanism that ultimately enhances the value of the end-product itself. How? The product is now distributed across a broader and more diverse audience, and it is open to further critical analysis.

The universities have built a racket. They have deployed a Chinese wall between learning in a cloistered environment and the rest of the world, who are not immediate participants. The Guardian wrote an interesting article on this matter and a very apt quote puts it all together.

“Academics not only provide the raw material, but also do the graft of the editing. What’s more, they typically do so without extra pay or even recognition – thanks to blind peer review. The publishers then bill the universities, to the tune of 10% of their block grants, for the privilege of accessing the fruits of their researchers’ toil. The individual academic is denied any hope of reaching an audience beyond university walls, and can even be barred from looking over their own published paper if their university does not stump up for the particular subscription in question.

This extraordinary racket is, at root, about the bewitching power of high-brow brands. Journals that published great research in the past are assumed to publish it still, and – to an extent – this expectation fulfils itself. To climb the career ladder academics must get into big-name publications, where their work will get cited more and be deemed to have more value in the philistine research evaluations which determine the flow of public funds. Thus they keep submitting to these pricey but mightily glorified magazines, and the system rolls on.”

http://www.guardian.co.uk/commentisfree/2012/apr/11/academic-journals-access-wellcome-trust

JSTOR is a not-for-profit organization that has invested heavily in providing an online system for archiving, accessing, and searching digitized copies of over 1,000 academic journals. More recently, I noticed some effort on their part to allow public access to only 3 articles over a period of 21 days. This stinks! This policy reflects an intellectual snobbery beyond Himalayan proportions. The only folks that have access to these academic journals and studies are professors and researchers that are affiliated with a university and its libraries. Aaron Swartz noted the injustice of hoarding such knowledge and tried to distribute a significant proportion of JSTOR’s archive through one or more file-sharing sites. And what happened thereafter was perhaps one of the biggest misapplications of justice. The same justice that disallows asymmetry of information on Wall Street is being deployed to preserve the asymmetry of information in the halls of learning.

MSNBC contributor Chris Hayes criticized the prosecutors, saying “at the time of his death Aaron was being prosecuted by the federal government and threatened with up to 35 years in prison and $1 million in fines for the crime of—and I’m not exaggerating here—downloading too many free articles from the online database of scholarly work JSTOR.”

The Associated Press reported that Swartz’s case “highlights society’s uncertain, evolving view of how to treat people who break into computer systems and share data not to enrich themselves, but to make it available to others.”

Chris Soghoian, a technologist and policy analyst with the ACLU, said, “Existing laws don’t recognize the distinction between two types of computer crimes: malicious crimes committed for profit, such as the large-scale theft of bank data or corporate secrets; and cases where hackers break into systems to prove their skillfulness or spread information that they think should be available to the public.”

Kelly Caine, a professor at Clemson University who studies people’s attitudes toward technology and privacy, said Swartz “was doing this not to hurt anybody, not for personal gain, but because he believed that information should be free and open, and he felt it would help a lot of people.”

And then there were some modest reservations, and Swartz’s actions were attributed to reckless judgment. I contend that this does injustice to someone of Swartz’s commitment and intellect … the recklessness was his inability to grasp the notion that an imbecile in the system would pursue 35 years of imprisonment and a $1M fine … it was not that he was unaware of what he was doing, but he believed, as do many, that scholarly academic research should be freely available to all.

We have a Berlin wall that needs to be taken down. Swartz started that but he was unable to keep at it. It is important not to rest in this endeavor, and everyone ought to actively petition their local congressman to push bills that will allow open access to these academic articles.

John Maynard Keynes had warned of the folly of “shutting off the sun and the stars because they do not pay a dividend”, because what is at stake here is the reach of the light of learning. Aaron was at the vanguard leading that movement, and we should persevere to become those points of light that will enable JSTOR to disseminate the information that they guard so unreservedly.

LinkedIn Endorsements: A Failure or a Brilliant Strategy?

Posted by Hindol Datta

LinkedIn endorsements have no value. So say many pundits! Here are some interesting articles that speak of the uselessness of this product feature in LinkedIn.

http://www.businessinsider.com/linkedin-drops-endorsements-by-year-end-2013-3

http://mashable.com/2013/01/03/linkedins-endorsements-meaningless/

I have some opinions on this matter. I started a company last year that allows people within and outside of the company to recommend professionals based on projects. We have been ushered into a world where our jobs, for the most part, constitute a series of projects that are undertaken over the course of a person’s career. The recognition system around this granular element is lacking; we have recommendations and recognition systems that have been popularized by LinkedIn, Kudos, Rypple, etc. But we have not seen much development in tools that address recognition around projects in the public domain. I foresee the possibility of LinkedIn getting into this space soon. Why? It is simple. The answer is in their “useless” Endorsement feature that has been on since late last year. As of March 13, one billion endorsements have been given to 56 million LinkedIn members, which by those figures works out to an average of about 18 per endorsed member. What does this mean? It means that LinkedIn has just validated a potential feature which will add more flavor to the endorsements – Why have you granted these endorsements in the first place?

Thus, it stands to reason that the natural step is to reach out to these endorsers by providing them appropriate templates to add more flavor to the endorsements. Doing so will prompt a small community of the 56 million participants to add some flavor. Even if that constitutes 10%, that is about 5.6M members who are contributing to this feature. Now how many products do you know that release one feature and very quickly gather close to six million active participants to use it? In addition, this would only gain force since more and more people would use this feature and all of a sudden … the endorsements become a beachhead into a very strategic product.
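To see how sensitive that estimate is to the assumed participation rate, here is a small back-of-the-envelope sketch in Python. The 56 million figure and the 10% rate come from the text above; the other rates and the helper name `contributors` are hypothetical and included only for comparison.

```python
ENDORSED_MEMBERS = 56_000_000  # figure quoted in the post


def contributors(participation_rate):
    """Members who would add detail to endorsements at a given rate."""
    return round(ENDORSED_MEMBERS * participation_rate)


# 10% is the rate assumed in the post; 5% and 20% are hypothetical bounds.
for rate in (0.05, 0.10, 0.20):
    print(f"{rate:.0%} participation: {contributors(rate):,} members")
```

Even at half the assumed rate, the feature would start with millions of contributors, which is the point of the argument.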

The other area that LinkedIn will probably step into is to catch the users young. Today it happens to be professionals; I will not be surprised if they start moving into the university/college space, and what more effective way is there to bridge the two than to position a product that recognizes individuals for the projects they have collaborated on?

LinkedIn and Facebook are two of the great companies of our time and they are peopled with incredibly smart people. So what may seemingly appear as a great failure in fact will become the enabler of a successful product that will significantly increase the revenue streams of LinkedIn in the long run!

Darkness at Noon in Facebook!

Posted by Hindol Datta

Facebook began with a simple thesis: Connect Friends. That was the sine qua non of its existence. From a simple thesis to an effective UI design, Facebook has grown over the years to become the third largest community in the world. But over the last few years they have had to resort to generating revenue to meet shareholder expectations. Today it is noon at Facebook, but there is a long shadow of darkness that I posit has fallen upon perhaps one of the most influential companies in history.

The fact is that leaping from connecting friends to managing the conversations allows Facebook to create a petri dish for understanding social interactions at large scale, eased by their fine technology platform. To that end, they are moving into alternative distribution channels to create broader reach into a global audience and to gather deeper insights into the interaction templates of the participants. The possibilities are immense: this platform can be a collaborative beachhead into discoveries, exploration, learning, education, social and environmental awareness, and can ultimately contribute to an elevated human conscience. But it has faltered on account of the demands of the market (perhaps the shareholders and the analysts are much to blame), and it has become one global billboard for advertisers to promote their brands. Darkness at noon is the most appropriate metaphor to reflect Facebook as it is now.

Let us take a small turn to briefly look at some other very influential companies that have not been derailed as much as Facebook has. The companies are Twitter, Google and LinkedIn. Each of them is the leader in its category, and all of them have moved toward monetization schemes from their specific user base. Each of them has weighed in significantly in its category to create movements that have affected or will affect the course of the future. We all know how Twitter has contributed to super-fast news feeds globally that have spontaneously generated mass coalescence around issues that make a difference; Google has been an effective tool to allow an average person to access information; and LinkedIn has created a collaborative environment in the professional space. Thus, all three of these companies, despite fully feeding their appetite for revenue through advertising, have not compromised their quintessence for being. Now all of these companies can definitely move their artillery to encompass the trajectory of FB, but that would be a steep hill to climb. Furthermore, these companies have an aura associated with their categories: attempts to move out of their category have been feeble at best, and in some instances, not successful. Facebook has a phenomenal chance of putting together what they have to create a communion of knowledge and wisdom. And no company exists in the market better suited to do that at this point.

One could counter that Facebook sticks to its original vision and that what we have today is indeed what Facebook had planned for all along since the beginning. I don’t disagree. My point of contention is that, though Facebook has created this informal and awesome platform for conversations and communities among friends, it has glossed over the immense positive fallout that could occur as a result of these interactions: the development and enhancement of knowledge, collaboration, cultural play, diversity of thought, philanthropy, crowd-sourced scientific and artistic breakthroughs, etc. In other words, the original objective has been met for the most part. Thank you Mark! Now Facebook needs to usher in a renaissance in the courtyard. Facebook needs to find a way out of the advertising morass that has shed darkness over all the product extensions and launches that have taken place over the last 2 years: Facebook can force a point of inflection to quadruple its impact on the course of history and knowledge. And the revenue will follow!

Posted in Corporate Social Responsibility, Employee Engagement, Innovation, Learning Organization, Learning Process, Narratives, Social Causes, Social Dynamics, Social Network, Social Systems

Tags: connection, conversation, crowdsource, democracy, diversity, experiments, social network, social systems

Why Jugglestars? How will this benefit you?

Posted by Hindol Datta

Consider this. Your professional career is a series of projects. Employers look for accountability and performance, and they measure you by how you fare on your projects. Everything else, for the most part, is white noise. The projects you work on establish your skill set and before long – your career trajectory. However, all the great stuff that you have done at work is for the most part hidden from other people in your company or your professional colleagues. You may get a recommendation on LinkedIn, which is fairly high-level, or you may receive endorsements for your skills, which is awesome. But the Endorsements on LinkedIn seem a little random, don’t they? Wouldn’t it be just awesome to recognize, or be recognized by, your colleagues for projects that you have worked on? We are sure that there are projects that you have worked on that involve third-party vendors, consultants, service providers, clients, etc. – well, now you have a forum to send and receive recognition, in a beautiful form factor, that you can choose to display across your networks.

Imagine an employee review. You must have spent some time thinking through all the great stuff that you have done that you want to attach to your review form. And you may have, in your haste, forgotten some of the great stuff that you have done and been recognized for informally. So how cool would it be to print or email all the projects that you’ve worked on and the recognition you’ve received to your manager? How cool would it be to also send a list of all the people that you have recognized for their phenomenal work? For in the act of participating in the recognition ecosystem that our application provides – you are an engaged and prized employee that any company would want to retain, nurture and develop.

Now imagine you are looking for a job. You have a resume. That is nice. And then the potential employer or recruiter is redirected to your professional networks and they have a glimpse of your recommendations and skill sets. That is nice too! But seriously…wouldn’t it be better for the hiring manager or recruiter to have a deeper insight into some of the projects that you have done and the recognition that you have received? Wouldn’t it be nice for them to see how active you are in recognizing great work of your other colleagues and project co-workers? Now they would have a more comprehensive idea of who you are and what makes you tick.

We help you build your professional brand and convey your accomplishments. That translates into greater internal development opportunities in your company, promotion, increase in pay, and it also makes you more marketable. We help you connect to high-achievers and forever manage your digital portfolio of achievements that can, at your request, exist in an open environment. JuggleStars.com is a great career management tool.

Check out www.jugglestars.com


Posted in Employee Engagement, Employee retention, Extrinsic Rewards, Innovation, Intrinsic Rewards, Leadership, Learning Organization, Learning Process, Motivation, Organization Architecture, Recognition, Rewards, Social Dynamics, Social Network, Social Systems, Talent Management

Tags: communication channel, conversation, crowdsource, employee engagement, employee recognition, extrinsic motivation, intrinsic motivation, learning organization, mass psychology, social network, social systems, talent management

The Political Campaign Juggernaut – What Obamney campaigns can teach Organizations!

Posted by Hindol Datta

The Presidential election is tomorrow. I shall not disclose my position, but I am a San Francisco/Bay Area Native. Any doubts about whom I am most likely inclined toward? Most likely not! But the campaign throughout the year got me thinking. Imagine … over $1.3B has been spent to either bash someone or to send a message out. Over $1.3B! I do not have the actual numbers, but what I do know is that about $1B was spent in 2008 and it is estimated that the total spend was at least 30% more for the 2012 campaign. That makes it one of the biggest annual marketing budgets. To put it in context, that is about 40% more than what Apple spent on advertising in 2011 ($933M).

We are expecting about 100M people to vote. 100M people to give a like for either party. Now look at it this way. $1.3B suggests that the total presidential campaign budget would translate to over 400M clicks (assuming $3 per click) or about 650 billion impressions (assuming $2 per 1000 impressions). Of course, that is not actually the case because there is payroll, organization expenses, etc. But you get the point. It is a big, big budget … and it is one of the very few budgets that tend to be managed very well. And large as it is, the figure does not take into account the volunteer base that goes into the campaigns.
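The arithmetic behind those figures can be checked with a short Python sketch. The budget, the cost per click, the cost per thousand impressions and the expected turnout are the rough numbers quoted above; the variable names are illustrative, and the per-voter figure is simply derived from the same inputs.

```python
BUDGET = 1_300_000_000          # estimated 2012 campaign spend, in dollars
COST_PER_CLICK = 3.0            # assumed dollars per click
COST_PER_MILLE = 2.0            # assumed dollars per 1,000 impressions
EXPECTED_VOTERS = 100_000_000   # expected turnout quoted in the post

clicks = BUDGET / COST_PER_CLICK
impressions = BUDGET / COST_PER_MILLE * 1000
spend_per_voter = BUDGET / EXPECTED_VOTERS

print(f"Clicks:          {clicks:,.0f}")
print(f"Impressions:     {impressions:,.0f}")
print(f"Spend per voter: ${spend_per_voter:,.2f}")
```

Under these assumptions the budget buys roughly 433 million clicks or 650 billion impressions, and it amounts to about $13 for every expected voter.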

Now the outcome associated with political campaigns is fairly concrete. Either you have put the money to good use, resulting in the election of the appropriate person, or the money spent has not been good enough. Who do you fire? The person who loses either moves shop from the White House or considers becoming the CEO of the next big thing – perhaps a public equity capital group. Either way, we can take some learnings from all that has transpired and apply them to organizations. Of course, most organizations do not have this massive budget but regardless … they do have substantial marketing budgets and so the question is: What can we learn from what we have seen in the political theater that would enable the organization to shape and landscape the customer and employee mindshare?

Here are a few key points:

1. Pounding the message: Organizations have to be focused on the end goal and ensure at all times that every message being delivered serves a set of key objectives that enable organization success. That means that there should be no ambiguity as to what the organization and its brand represent. Dilution of the message may open up pockets of undecided customers or employees that could vote with their wallet and their feet quite readily.

2. Creating advocacy groups: Organizations have to create and nurture product and message evangelists by placing these nodes across the many fields where potential customers and employees may come in contact with the organization. That would mean almost all social media channels, offline channels, conferences, elicited testimonials, investor and public relations efforts, well-timed special news releases, etc. Advocacy groups are a proxy for all the channels that an organization must leverage.

3. Aspirational Inclinations: Sell a dream! Sell possibilities! Sell the Why Nots! People tend to converge upon a platform of optimism. Yet, organizations must also be able to short their competitors' offerings or perhaps not mention them at all.

4. Polling the behavior: If you notice, political campaigns have taken a page out of the Lean Startup methodology. If polls go haywire … resources and messages are tweaked to create a semblance of stability and to get back to the desired radar frequencies. Tweaking the message and the presence of the messenger becomes important. This is field deployment of solutions based on what all the gathered data intelligence is telling you.

5. Super PACs and Angel Affiliates: You have limits, as do all organizations! No problem! Create evangelists that are not directly on the take. These are folks that will push your culture to the furthest corners of the globe. So recognize them and support them. They carry the torch since they fully believe in your mission and that your organization's outcomes will impact them positively. How? Let them know. Drill. Baby. Drilllll the message.

6. Electoral College wins, not popular polls: Focus on the profitable customers; get the very best employees. Stratify your business so that you buy the win. You may not have the most likes, but you will have enough among the strata that truly matter.

7. Give the final reason: Give customers and employees a reason to vote. You want them to vote for you, but all the same you still want them to vote. You want the market of ideas to expand, even though they may serve competing visions in the tapestry of organizations in your space. But in trying to harness the turnout to the polls, you will have done as well as you can to draw them to your mojo.

See all of you prospective voters at the polls tomorrow. Applaud and keep the flames of democracy alive.