Blog Archives

Chaos as a system: New Framework

Posted by Hindol Datta

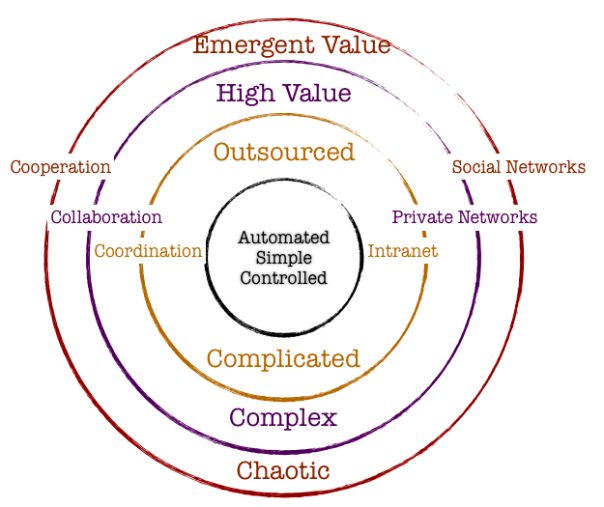

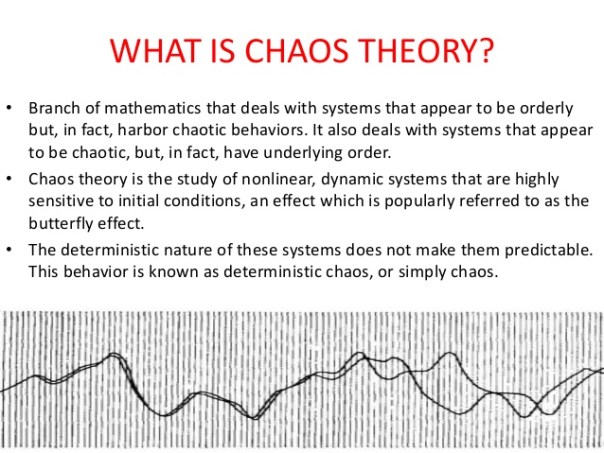

Chaos is not an unordered phenomenon. A certain homeostatic mechanism is at play that forces a system with the inherent characteristics of a “chaotic” process to converge toward some sort of stability with respect to predictability and parallelism. Our understanding of order, which is deemed to be the opposite of chaos, rests on a shared consensus that the system will behave in an expected manner. Hence, we often describe such systems as “balanced”, “stable” or “in order”. However, it is also becoming common knowledge in the science of chaos that slight changes in the initial conditions of a system can produce variability in the final output that might not be predictable. So how does one straddle order and chaos in an observed system, and what implications does this have for the ongoing study of such systems?

Chaotic systems can be considered to have a highly complex order. It might require the tools of pure mathematics and extreme computational power to understand such systems. These tools have provided some insights into chaotic systems by visually representing outputs as recurrences of a distribution of outputs related to a given set of inputs. Another interesting tie-in in this model is the existence of entropy, the variable that taxes a system and diminishes its impact on expected outputs. Any system acts like a living organism: it requires oodles of resources to survive and a well-established set of rules to govern the internal mechanism that drives the vector of its movement. What emerges is that chaotic systems display some order while being subject to an inherent mechanism that softens their impact over time. Most approaches to studying complex and chaotic systems involve understanding graphical plots of a fractal nature and bifurcation diagrams. These models illustrate very complex recurrences of outputs directly related to inputs. Hence, complex order emerges from chaotic systems.

A case in point would be the relation of a population parameter to its immediate environment. It is argued that a population in an environment will maintain a certain number, and that external forces will actively work to ensure that the population stays at that standard number. It is a very Malthusian analytic, but what is interesting is that new and meaningful influences on the number could increase the scale. In our current context, a change in technology or ingenuity could significantly alter the natural homeostatic number. The fact remains that forces are always at work on a system. Some systems are autonomic: they self-organize and correct themselves toward some stable convergence. Other systems are not autonomic, and one can only resort to the laws of probability to get some insight into the possible outputs, but never to the point of certainty in prediction.

Organizations have a lot of interacting variables at play at any given moment. In order to influence the organization’s behavior and/or direction, policies might be formulated to bring about the desired results. However, these nudges toward setting the organization off in the right direction might also lead to unexpected results. The aim is to foresee some of these unexpected results and mollify the adverse consequences while, in parallel, encouraging the system to maximize the benefits. So how does one effect such changes?

It all starts with building out an operating framework. There needs to be clarity around goals and the ultimate purpose of the system. Thus there are a few objectives that bind the framework.

- Clarity around goals and the timing around achieving these goals. If there is no established time parameter, then the system might jump across various states over time and it would be difficult to establish an outcome.

- Evaluate all of the internal and external factors that might operate in the framework that would impact the success of organizational mandates and direction. Identify stasis or potential for stasis early since that mental model could stem the progress toward a desirable impact.

- Apply toll gates strategically to evaluate whether the system is proceeding along the lines of expectation, so that any early aberrations are evaluated and the rules are tweaked to get the system to track a desirable trajectory.

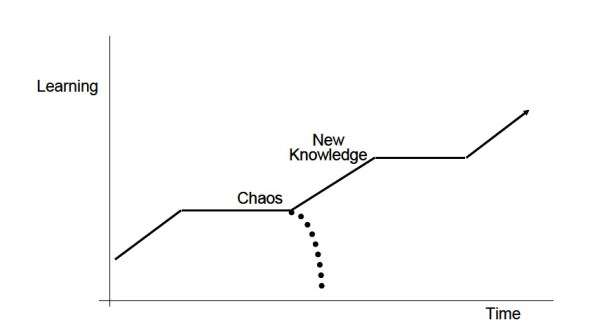

- Develop islands of learning across the path and engage the right talent and other parameters to force adaptive learning and therefore a more autonomic direction to the system.

- Bind the agents and actors in the organization to a shared sense of purpose within the parameter of time.

- Introduce diversity into the framework early in the process. The engagement of diversity allows the system to modulate around a harmonic mean.

- Maintain a well-documented knowledge base so that the accretive learning that results from changes in the organization becomes a springboard for new initiatives and reduces the costs of potential failures or latency in execution.

- Finally, encourage the leadership to ensure that the vector is pointed in the right direction at any given time.

Once a framework and the engagement rules are drawn out, it is necessary to rely on the natural velocity and self-organization of purposeful agents to move the agenda forward, hopefully with little or no intervention. A mechanism of feedback loops along the way would gauge the efficacy of the direction of the system. The implication is that strategy and operations must be aligned and reevaluated, and positive behavior encouraged, to ensure that the system meets its objective.

However, as noted above, entropy is a dynamic that often threatens to derail the system objective. There will be external or internal forces constantly at work to undermine system velocity. The operating framework needs to anticipate that real possibility and pre-empt it with rules or the introduction of specific capital to neutralize these occurrences. Stasis is an active agent that can work against the system dynamic. Stasis is the inclination of agents or behaviors to anchor the system to some status quo. We have to be mindful that change might not be embraced, and if there are resistors to that change, the dynamic of organizational change will invariably be impacted. It will take a lot more to get something done than would otherwise be needed. Identifying stasis and agents of stasis is a foundational element.

While the above is one example of how to manage organizations in light of how chaotic systems behave, another example would be the formulation of the organization’s strategy in response to external forces. How do we apply our learnings in chaos to deal with the challenges of competitive markets by aligning the internal organization to external factors? One of the key insights that chaos surfaces is that it is nigh impossible to fully anticipate all of the external variables, and that leaving the system to adapt organically to external dynamics allows the organization to thrive. To thrive in this environment, the organization must be able to change rapidly outside of traditional hierarchical expectations: when organizations are unable to make those rapid changes or make strategic bets in response to external systems, the execution value of the organization diminishes.

Margaret Wheatley, in her book Leadership and the New Science: Discovering Order in a Chaotic World (revised edition), says, “Organizations lack this kind of faith, faith that they can accomplish their purposes in various ways and that they do best when they focus on direction and vision, letting transient forms emerge and disappear. We seem fixated on structures…and organizations, or we who create them, survive only because we build crafty and smart—smart enough to defend ourselves from the natural forces of destruction.” Karl Weick, an organizational theorist, believes that business strategies should be “just in time…supported by more investment in general knowledge, a large skill repertoire, the ability to do a quick study, trust in intuitions, and sophistication in cutting losses.”

We can expand the notion of a chaos in a system to embrace the bigger challenges associated with environment, globalization, and the advent of disruptive technologies.

One of the key challenges of globalization is how policy makers balance it against potential social disintegration. As policies emerge to acknowledge the benefits of, and the necessity for, integrating with a new and dynamic global order, the corresponding impact on local institutions can vary and might even be deleterious. Policies have to encourage flexibility in local institutional capability, and that might mean increased investments in infrastructure, creating a diverse knowledge base, establishing rules that govern free but fair trading practices, and encouraging the mobility of capital across borders. The grand challenges of globalization weigh upon governments and private entities that scurry to maintain a continual balance, ensuring that local systems survive and flourish within the context of the larger framework. The boundaries of the system are larger and incorporate many more agents, which leads to the real possibility of systems that are difficult to control via a hierarchical or centralized body politic. Decision making is thus pushed out to the agents and actors, but these work under a larger set of rules. Rigidity in rules and governance can amplify failures in this process.

Related to the realities of globalization is the advent of exponential technologies. Technologies with extreme computational power are integrating and creating robust communication networks within and outside of the system: the system herein could represent nation-states, companies or industrialization initiatives. Will the exponential technologies diffuse across larger scales quickly, and will the corresponding increase in adoption of new technologies change the future of the human condition? There are fears that new technologies will displace large groups of economic participants who are not immediately equipped to incorporate and feed those technologies into the future: that might be on account of disparity in education and wealth, institutional policies, and the availability of opportunities. Since technologies are exponential, we get a performance curve that is difficult for us to understand. In general, we tend to think linearly, and this frailty in our thinking removes us from the path to the future sooner rather than later. What makes this difficult is that the exponential impact is occurring across various sciences, and no one body can effectively fathom the impact and the direction. Bill Gates says it well: “We always overestimate the change that will occur in the next two years and underestimate the change that will occur in the next ten. Don’t let yourself be lulled into inaction.” Do chaos theory and complexity science arm us with a different tool set from the traditional one of strategy roadmaps and product maps? If society is being carried along by the intractable power of the exponent in technological advances, then a linear map might not provide the right framework for developing strategies for long-term success. Rather, a more collaborative and transparent roadmap, one that encourages the integration of thoughts and models among actors who are adapting and adjusting dynamically by sheer force of will, would perhaps be an alternative and practical approach in the new era.

Lately there has been a lot of discussion around climate change. It has been argued, with good reason and empirical evidence, that the environment can be adversely impacted on account of mass industrialization, increase in population, resource availability issues, the inability of the market system to incorporate the cost of spillover effects, the adverse impact of moral hazard and the theory of the commons, etc. While there are demurrers who contest the long-term climate change issues, the train seems to have already left the station! The facts do clearly reflect that the climate will be impacted. Skeptics might argue that science has not yet developed a precise predictive model of the weather system two weeks out, and that it is foolhardy to conclude a dystopian future on climate fifty years out. However, the counterargument is that our inability to explain the near-term effects of weather changes and turbulence does not negate the existence of climate change due to the accretion of greenhouse impact. Boiling a pot of water will not necessarily give us an understanding of all of the convection currents among the water molecules, but that does not change the fact that the water will heat up.

Posted in Business Process, Chaos, Complexity, emergent systems, exponential, growth, Innovation, Leadership, Learning Organization, Learning Process, Model Thinking, Narratives, Order, Organization Architecture, scale, Social Dynamics, Social Systems

Tags: chaos, Complexity, environment, innovation, learning organization, order, platform, systems, transparency

History of Chaos

Posted by Hindol Datta

“Chaos is inherent in all compounded things. Strive on with diligence!” – Buddha

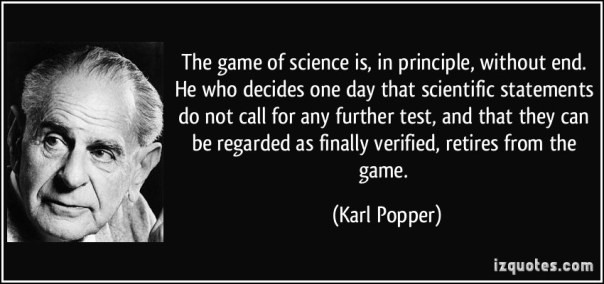

Scientific theories are characterized by the fact that they are open to refutation. To create a scientific model, there are three successive steps that one follows: observe the phenomenon, translate that into equations, and then solve the equations.

One of the early philosophers of science, Karl Popper (1902-1994), discussed this at great length in his book The Logic of Scientific Discovery. He distinguishes scientific theories from metaphysical or mythological assertions. His main thesis is that a scientific theory must be open to falsification: its results have to be independently reproducible, and yet one must be able to gather data points that might refute the fundamental elements of the theory. Developing scientific theories in a manner that can be falsified by observations results in new and more stable theories over time. A theory can be rejected in favor of a rival theory, or recalibrated in keeping with new observations and outcomes. Until Popper’s time and even after, the social sciences have tried to work toward a framework that would allow the construction of models that formulate predictive laws governing social dynamics. In his book The Poverty of Historicism, Popper maintained that such an endeavor is not fruitful, since it does not take into consideration the myriad minor elements that interact closely with one another in a meaningful way. Hence, he touched indirectly on the concept of chaos and complexity and how it bears on the scientific method. We will now journey into the past and through the present to understand the genesis of the theory and how leading scientists and philosophers have channeled it into a framework for studying society and nature.

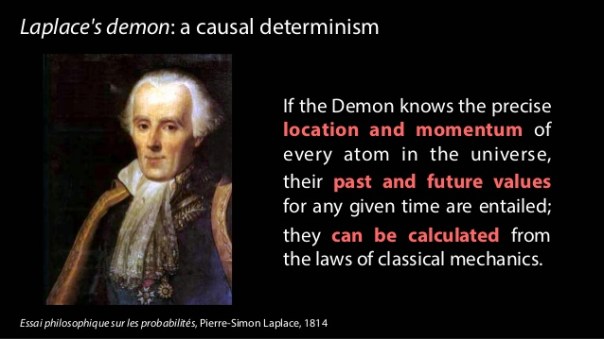

As we have already discussed, one of the main pillars of Science is determinism: the possibility of prediction. It holds that every event is determined by natural laws. Nothing can happen without an unbroken chain of causes that can be traced all the way back to an initial condition. The deterministic nature of science goes all the way back to Aristotelian times. Interestingly, Aristotle argued that there is some degree of indeterminism, and he relegated this to chance or accidents. Chance is a character that makes its presence felt in every plot in the human and natural condition. Aristotle wrote that “we do not have knowledge of a thing until we have grasped its why, that is to say, its cause.” He goes on to illustrate his idea in greater detail, namely that the final outcome we see in a system is on account of four kinds of influencers: Matter, Form, Agent and Purpose.

Matter is what constitutes the outcome. For a chair it might be wood. For a statue, it might be marble. The outcome is determined by what constitutes the outcome.

Form refers to the shape of the outcome. Thus, a carpenter or a sculptor would have a pre-conceived notion of the shape of the outcome and they would design toward that artifact.

Agent refers to the efficient cause or the act of producing the outcome. Carpentry or masonry skills would be important to shape the final outcome.

Finally, the outcome itself must serve a purpose of its own. For a chair, it might be something to sit on; for a statue, it might be something to be marveled at.

However, Aristotle also admits that luck and chance can play an important role that does not fit the causal framework in its own right. Some things do happen by chance or luck. Chance is a rare and random event, and it is typically brought about by some purposeful action or by nature.

We had briefly discussed Laplace’s demon, and Laplace summarized the idea wonderfully: “We ought then to consider the present state of the universe as the effect of its previous state and as the cause of that which is to follow. An intelligence that, at a given instant, could comprehend all the forces by which nature is animated and the respective situation of the beings that make it up, if moreover it were vast enough to submit these data to analysis, would encompass in the same formula the movements of the greatest bodies of the universe and those of the lightest atoms. For such an intelligence nothing would be uncertain, and the future, like the past, would be open to its eyes.” He thus admits that we lack such vast intelligence and are forced to use probabilities in order to get some understanding of dynamical systems.

It was Maxwell who, in his pivotal book “Matter and Motion”, published in 1876, laid the groundwork of chaos theory.

“There is a maxim which is often quoted, that ‘the same causes will always produce the same effects.’ To make this maxim intelligible we must define what we mean by the same causes and the same effects, since it is manifest that no event ever happens more than once, so that the causes and effects cannot be the same in all respects. There is another maxim which must not be confounded with that quoted at the beginning of this article, which asserts ‘That like causes produce like effects.’ This is only true when small variations in the initial circumstances produce only small variations in the final state of the system. In a great many physical phenomena this condition is satisfied: but there are other cases in which a small initial variation may produce a great change in the final state of the system, as when the displacement of the points cause a railway train to run into another instead of keeping its proper course.” What is interesting in the above quote, however, is that Maxwell seems to hold that in a great many cases there is no sensitivity to initial conditions.

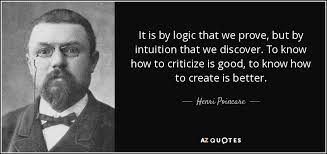

In the 1890’s, Henri Poincare became the first exponent of chaos theory. He says, “it may happen that small differences in the initial conditions produce very great ones in the final phenomena. A small error in the former will produce an enormous error in the latter. Prediction becomes impossible.” This was a far cry from the Newtonian world, which sought order in how the solar system worked. Newton’s model was posited on the basis of the interaction between just two bodies. What would happen if three bodies, or N bodies, were introduced into the model? This gave rise to the Three Body Problem, and Poincare came to embrace the notion that the problem could not be solved exactly and could only be tackled by approximate numerical techniques. The resulting solutions were so tangled that it was not only difficult to draw them, it was near impossible to derive equations to fit the results. In addition, Poincare discovered that if the three bodies started from slightly different initial positions, the orbits would trace out different paths. This led to Poincare being designated the Father of Chaos Theory, since he laid the groundwork for its most important element: sensitive dependence on initial conditions.

In the early 1960’s, the first true experimenter in chaos was a meteorologist named Edward Lorenz. He was working on a problem in weather prediction and had set up a system of twelve equations to model the weather. He set the initial conditions and left the computer to predict what the weather might be. Upon revisiting a sequence later on, he decided to rerun it starting from the middle, and he noticed that the outcome was significantly different. The immediate question was why the outcome differed so much from the original. He traced this back to the initial condition: the input differed in its decimal places. The computer had stored all of the decimal places, whereas he had typed in only the first three. (He had entered .506, truncated from .506127.) He would have expected such a slight variation in input to create a sequence close to the original, but that was not to be: it was distinctly and hugely different. This effect became known as the Butterfly effect, a term often used interchangeably with Chaos Theory. Ian Stewart, in his book Does God Play Dice? The Mathematics of Chaos, describes this visually as follows:

“The flapping of a single butterfly’s wing today produces a tiny change in the state of the atmosphere. Over a period of time, what the atmosphere actually does diverges from what it would have done. So, in a month’s time, a tornado that would have devastated the Indonesian coast doesn’t happen. Or maybe one that wasn’t going to happen, does.”

Lorenz thus argued that it would be impossible to predict the weather accurately. However, he reduced his experiment to a smaller set of equations and observed how small changes in initial conditions affect the predictability of smaller systems. He found a parallel: changes in initial conditions tend to render predictions of the final outcome of a system inaccurate. As he looked at alternative systems, a strange pattern emerged: the trajectory always traced out a double spiral. The system never settled down to a single point, yet it never repeated its trajectory. It was a path-breaking discovery that led to further advances in the science of chaos in later years.
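The divergence Lorenz observed can be reproduced in a few lines. The sketch below is not his original twelve-equation weather model. It assumes the three-equation system commonly associated with his name, with the conventional parameter values (sigma = 10, rho = 28, beta = 8/3), and uses a hand-written fourth-order Runge-Kutta integrator. The function names, the step size and the starting point are illustrative choices; the only thing borrowed from the story above is the pair of inputs, .506127 and .506.

```python
def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the three-equation Lorenz system (assumed model)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one classical fourth-order Runge-Kutta step."""
    def shifted(base, slope, h):
        return tuple(b + h * s for b, s in zip(base, slope))

    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_over_time(x0_a, x0_b, steps=4000, dt=0.01):
    """Run two copies that differ only in their starting x value and
    record the distance between them at every step."""
    a = (x0_a, 1.0, 1.0)  # hypothetical starting point, not Lorenz's data
    b = (x0_b, 1.0, 1.0)
    gaps = []
    for _ in range(steps + 1):
        gaps.append(sum((p - q) ** 2 for p, q in zip(a, b)) ** 0.5)
        a, b = rk4_step(a, dt), rk4_step(b, dt)
    return gaps


gaps = separation_over_time(0.506127, 0.506)
for step in (0, 500, 1000, 2000, 4000):
    print(f"t = {step * 0.01:5.1f}   separation = {gaps[step]:.6f}")
```

The two runs start roughly 0.0001 apart and track each other for a while before drifting as far apart as the double spiral allows. That is the point of the story: the truncated input did not add a small error to the forecast, it eventually produced a different forecast.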

Years later, Robert May investigated how this impacts population. He established an equation that reflected population growth and initialized it with a value for the growth rate parameter. (The growth rate was initialized to 2.7.) May found that as he increased the parameter value, the population grew, which was expected. However, once he passed a growth value of 3.0, he noticed that the equation would not settle down to a single population but would branch out to two different values over time. If he raised the parameter further, the branches would bifurcate again, giving four different values. As he continued to increase the parameter, the lines continued to double until chaos appeared and it became hard to make point predictions.
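May’s observation is easy to try out. The sketch below assumes the equation in question is the logistic map, x_next = r * x * (1 - x), the model usually associated with May’s work; the text above does not name it. The helper name, the starting value, the number of discarded iterations and the rounding tolerance are all illustrative choices. For each growth rate, the code throws away the early iterations and then collects the distinct values the population keeps visiting.

```python
def long_run_values(r, x0=0.5, burn_in=2000, sample=256, digits=4):
    """Iterate the logistic map (assumed model), discard the transient,
    and return the distinct values visited afterwards."""
    x = x0
    for _ in range(burn_in):      # let the transient die out
        x = r * x * (1 - x)
    seen = set()
    for _ in range(sample):       # collect the long-run behaviour
        x = r * x * (1 - x)
        seen.add(round(x, digits))
    return sorted(seen)


for r in (2.7, 3.2, 3.5, 3.9):
    values = long_run_values(r)
    print(f"r = {r}: {len(values)} distinct long-run value(s), first few: {values[:4]}")
```

With these settings the population settles on a single value at 2.7, alternates between two values at 3.2 and cycles through four at 3.5. At 3.9 it no longer settles into a short cycle, which is where point predictions stop being useful.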

There was another related discovery that emerged from the experiment. When one looks at the bifurcation diagram, one tends to see similarity between the small and large branches. This self-similarity became an important part of the development of chaos theory.

Benoit Mandelbrot started to study this self-similarity pattern in chaos. A mathematician with an interest in economics, he applied mathematical equations to predict fluctuations in cotton prices. He noted that particular price changes were not predictable, but that certain patterns were repeated and the degree of variation in prices had remained largely constant. This bears on a notion that one might arrive at upon a preliminary reading of chaos: if the weather cannot be predicted, how can we predict climate many years out? On the contrary, Mandelbrot’s experiments seem to suggest that short time horizons are more difficult to predict than long time horizons, since systems tend to settle into patterns that reflect smaller patterns across periods. This led to the development of the concept of fractal dimensions, namely that sub-systems develop a symmetry with the larger system.

Feigenbaum was a scientist who became interested in how quickly bifurcations occur. He discovered that regardless of the scale of the system, the bifurcations came at a constant rate of 4.669. If you reduce or enlarge the scale by that constant, you see the same mechanics at work, which leads to an equivalence in self-similarity. He applied this to a number of models and the same scaling constant took effect. Feigenbaum had established, for the first time, a universal constant in chaos theory. This was important because finding a constant in the realm of chaos theory suggested that chaos was an ordered process, not a random one.
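One way to make the “constant rate” precise is as a limit of ratios between successive bifurcation intervals. The notation below is an assumption for illustration: r_n stands for the parameter value at which the n-th doubling occurs, and the numbers used are those of the logistic map, which the text does not name explicitly.

```latex
\delta \;=\; \lim_{n \to \infty} \frac{r_n - r_{n-1}}{r_{n+1} - r_n} \;\approx\; 4.669

% First ratio for the logistic map (assumed example):
r_1 = 3, \qquad r_2 = 1 + \sqrt{6} \approx 3.4495, \qquad r_3 \approx 3.5441
\quad\Longrightarrow\quad
\frac{r_2 - r_1}{r_3 - r_2} \approx \frac{0.4495}{0.0946} \approx 4.75
```

Even the very first ratio is already close to 4.669, and the ratios get closer as n grows. Each interval between doublings is roughly 4.669 times shorter than the one before it.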

Sir James Lighthill gave a lecture in which he made an astute observation:

“We are all deeply conscious today that the enthusiasm of our forebears for the marvelous achievements of Newtonian mechanics led them to make generalizations in this area of predictability which, indeed, we may have generally tended to believe before 1960, but which we now recognize were false. We collectively wish to apologize for having misled the general educated public by spreading ideas about determinism of systems satisfying Newton’s laws of motion that, after 1960, were to be proved incorrect.”

Posted in Chaos, Complexity, emergent systems, Innovation, Learning Organization, Learning Process, Model Thinking, Social Systems

Tags: adaptive system, chaos, Complexity, design, environment, innovation, learning organization, order, platform

Medici Effect – Encourage Innovation in the Organization

Posted by Hindol Datta

“Creativity is just connecting things. When you ask creative people how they did something, they feel a little guilty because they didn’t really do it, they just saw something. It seemed obvious to them after a while. That’s because they were able to connect experiences they’ve had and synthesize new things. And the reason they were able to do that was that they’ve had more experiences or they have thought more about their experiences than other people.”

– Steve Jobs

What is the Medici Effect?

Frans Johansson has written a lovely book on the Medici Effect. The term “Medici” relates to the Medici family of Florence, which made immense contributions to art, architecture and literature. They were pivotal in catalyzing the Renaissance, and some of the great artists and scientists that we revere today, among them Donatello, Michelangelo, Leonardo da Vinci and Galileo, were commissioned for their works by the family.

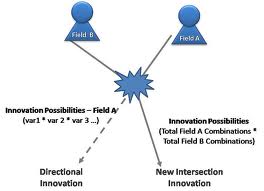

The Renaissance was the resurgence of the old Athenian democracy. It merged distinctive areas of humanism, philosophy, sciences, arts and literature into a unified body of knowledge that would advance the cause of human civilization. The Medici effect speaks to the outcome of creating a system that incorporates what, at first glance, may seem distinct and discrete disciplines into holistic outcomes and a shared simmering of wisdom that permeates the emergence of new disciplines, thoughts and implementations.

Supporting the organization to harness the power of the Medici Effect

We are past the industrial era, the Progressive era and the Information era. There are no formative lines that truly distinguish one era from another, but our knowledge has progressed along gray lines that have pushed the limits of human knowledge. We are now wallowing in a crucible wherein distinct disciplines have crisscrossed and merged together. The key thesis in the Medici effect is that the intersections of these distinctive disciplines enable the birth of new breakthrough ideas and leapfrog innovation.

So how do we introduce the Medici Effect in organizations?

The key way to implement the model is to provide the support infrastructure for the following:

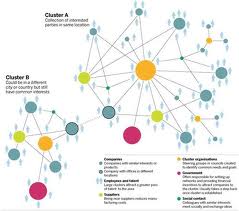

1. Connections: Our brains are naturally wired toward associations. We try to associate a concept with contextual elements around that concept to give the concept more meaning. We learn by connecting concepts and associating them, for the most part, with elements that we are conversant in. However, one can create associations within a narrow parameter, constrained within certain semantic models that we have created. Organizations can hence channel connections by implementing narrow parameters. On the other hand, connections can be far more free-form. That means that the connector thinks beyond the immediate boundaries of their domain or within certain domains that are “pre-ordained”. In those cases, we create what is commonly known as divergent thinking. In that approach, we cull elements from seemingly different areas but we thread them around some core to generate new approaches, new metaphors, and new models. Ensuring that employees are able to safely reach out to other nodes of possibilities is the primary implementation step to generate the Medici effect.

2. Collaborations: Connecting different streams of thought in different disciplines is a primary and formative step. To advance this further, organizations need to provide additional systems wherein people can collaborate among themselves. In fact, collaboration brings the final outcome about sooner. So enabling connections and enabling collaboration work in sync to create what I would call the network impact on a marketplace of ideas.

3. Learning Organization: Organizations need to continuously add fuel to the ecosystem. In other words, they need to bring in speakers, encourage and invest in training programs, allow exploration possibilities by developing an internal budget for that purpose and provide some time and degree of freedom for people to mull over ideas. This enables collaboration to be enriched within the context of diverse learning.

4. Encourage Cultural Diversity: Finally, organizations have to invest in cultural diversity. People from different cultures bring varied viewpoints and information, and view issues from different perspectives. Given the fact that we are more globalized now, an innate understanding of and immersion in cultural experience enhances the Medici effect. It also creates innovation and ground-breaking thoughts within a broader scope of compassion, humanism, and social and shared responsibilities.

Implementing systems to encourage the Medici effect will enable organizations to break out from legacy behavior and venture into uncharted territories. The charter toward unknown but exciting possibilities opens the gateway for amazing ideas that engage employees and enable them to beat a path to the intersection of new ideas.

Posted in Chaos, Employee Engagement, Innovation, Leadership, Learning Organization, Model Thinking, Motivation, Order, Organization Architecture, Social Dynamics, Social Network, Social Systems

Tags: boundaries, chaos, communication channel, creativity, crowdsource, discipline, diversity, employee engagement, experiments, intersection, learning organization, medici effect, social systems