
Navigating Chaos and Model Thinking

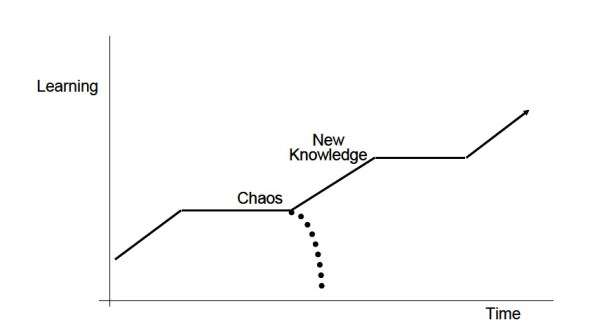

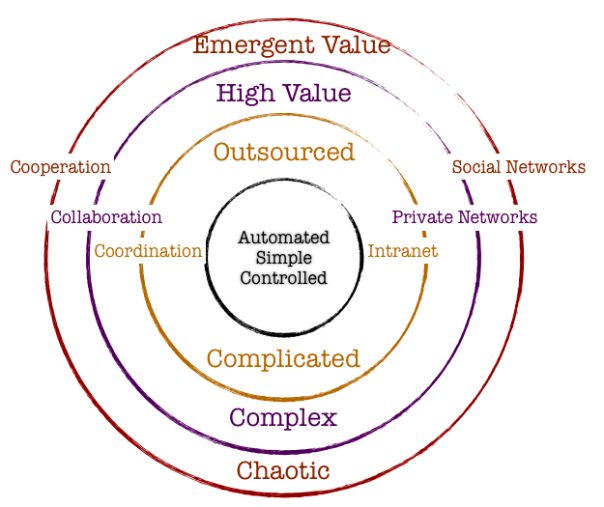

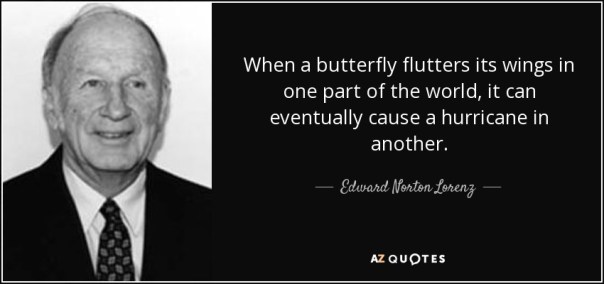

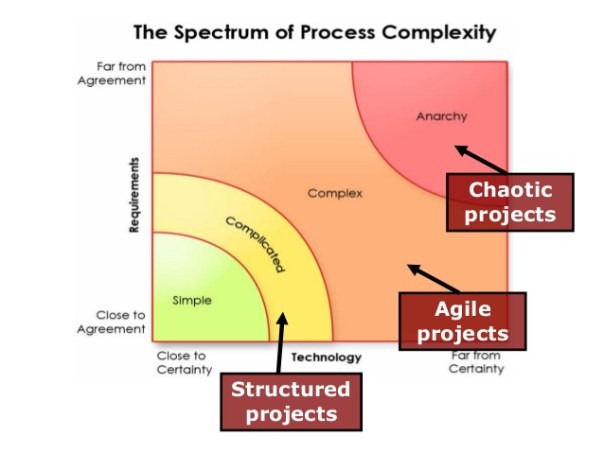

An inherent property of a chaotic system is that slight changes in its initial conditions produce disproportionate changes in outcome that are difficult to predict. Chaotic systems create outcomes that appear random: they are generated by simple, non-random processes, but their complexity emerges over time through numerous iterations of simple rules. The elements that compose a chaotic system might be few in number, but they work together to produce an intricate set of dynamics that amplifies small differences and makes the outcome hard to predict. These systems evolve over time according to their rules, their initial conditions, and how the constituent elements work together.
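To make the sensitivity to initial conditions concrete, here is a minimal sketch using the logistic map, a textbook chaotic system that is not mentioned in the discussion above and is chosen here purely as an illustration. The function names, the growth parameter `r = 4.0`, and the perturbation size are all assumptions made for the example.

```python
def logistic_trajectory(x0, r=4.0, steps=50):
    """Iterate the simple rule x -> r * x * (1 - x) and return every visited value."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def max_divergence(x0, delta=1e-9, r=4.0, steps=50):
    """Largest gap between two trajectories whose starting points differ by delta."""
    a = logistic_trajectory(x0, r, steps)
    b = logistic_trajectory(x0 + delta, r, steps)
    return max(abs(p - q) for p, q in zip(a, b))


if __name__ == "__main__":
    # Two starting points that differ by one part in a billion.
    print("initial gap:", 1e-9)
    print("largest gap within 50 steps:", max_divergence(0.2))
```

The rule itself is a single line of arithmetic, yet the two trajectories drift apart until they bear no resemblance to each other, which is the sense in which a simple, non-random process can look random.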

Complex systems are characterized by emergence. The interactions of the system's elements with one another and with the environment create new properties that influence the structural development of the system and the roles of the agents. Such systems self-organize, and hence it is difficult to understand or affect a system by studying its constituent parts in isolation. The task becomes even more formidable when one faces the prevalent reality that most systems exhibit non-linear dynamics.

So how do we incorporate management practices in the face of the chaos and complexity that are inherent in organizational structure and market dynamics? It is instructive to study this question in light of how management principles have evolved alongside scientific paradigms.

Newtonian Mechanics and Taylorism

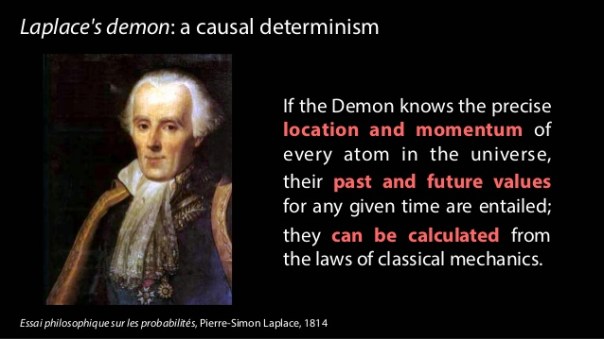

Traditional organization management has been heavily influenced by Newtonian mechanics. The five key assumptions of Newtonian mechanics are:

- Reality is objective

- Systems are linear and there is a presumption that all underlying cause and effect are linear

- Knowledge is empirical and acquired through collecting and analyzing data with the focus on surfacing regularities, predictability and control

- Systems are inherently efficient. Systems almost always follow the path of least resistance

- If inputs and processes are managed, the outcomes are predictable

Frederick Taylor is regarded as the father of scientific management, and his methods were widely adopted in manufacturing, including automotive companies, in the early twentieth century. Workers and processes were treated as input elements to ensure that the machine functioned per expectations. Linearity was built into the principle. Management's role was one of observation and control, and the system was expected to function best under hierarchical operating principles. Mass production and efficiency were the hallmarks of the management goal.

Randomness and the Toyota Way

The randomness paradigm recognized uncertainty as a pervasive constant. The various methods that the Toyota Way invoked, such as the 5 Whys, rested on the assumption that understanding cause and effect is instrumental, and this inclined management toward a more process-based deployment. Learning is introduced in this model as a dynamic variable, and there is a lot of emphasis on the agents and on giving them clarity about the purpose of their tasks. Efficiency and quality are presumed to be driven by the rank and file, and autonomous decisions are allowed. The management principle moves away from hierarchical, top-down control toward a more responsibility-driven labor force.

Complexity and Chaos and the Nimble Organization

Increasing complexity has led to more demands on the organization. With the advent of social media and rapid information distribution and a general rise in consciousness around social impact, organizations have to balance out multiple objectives. Any small change in initial condition can lead to major outcomes: an advertising mistake can become a global PR nightmare; a word taken out of context could have huge ramifications that might immediately reflect on the stock price; an employee complaint could force management change. Increasing data and knowledge are not sufficient to ensure long-term success. In fact, there is no clear recipe to guarantee success in an age fraught with non-linearity, emergence and disequilibrium. To succeed in this environment entails the development of a learning organization that is not governed by fixed top-down rules: rather the rules are simple and the guidance is around the purpose of the system or the organization. It is best left to intellectual capital to self-organize rapidly in response to external information to adapt and make changes to ensure organization resilience and success.

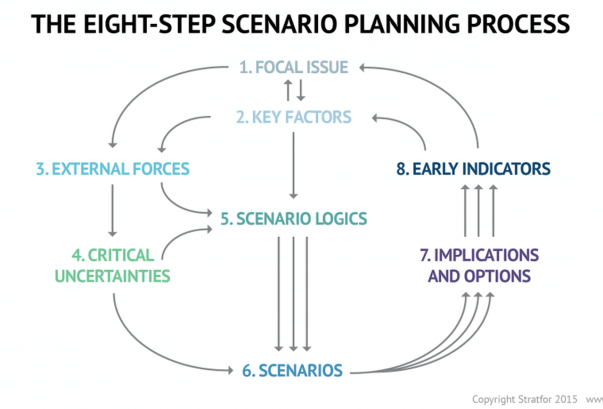

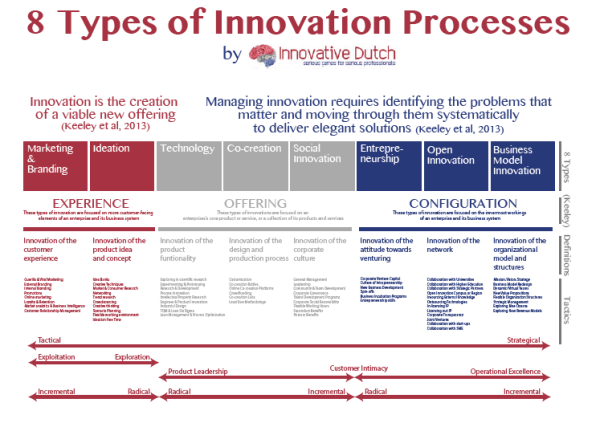

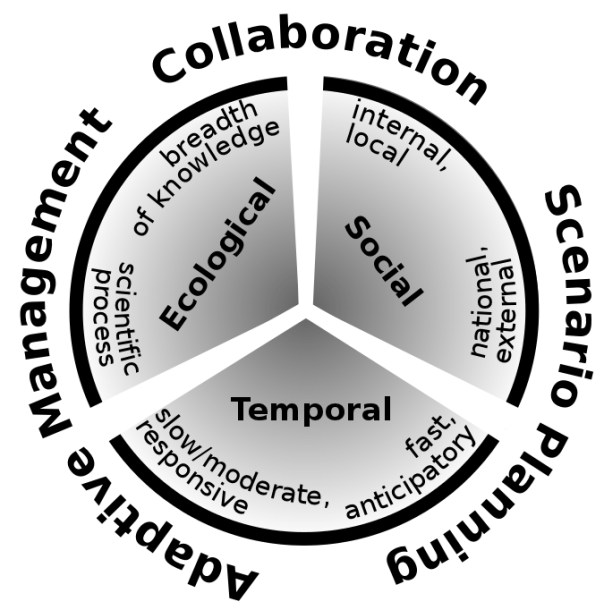

Companies are dynamic non-linear adaptive systems. The elements in the system are constantly interacting among themselves and with their external environment. This creates new emergent properties that are sensitive to the initial conditions. A change in purpose or strategic positioning could set off a domino effect and lead to outcomes that are not predictable. Decisions are pushed out to all levels in the organization, since the presumption is that local and diverse knowledge that emerges spontaneously in response to stimuli is superior to managing complexity in a centralized manner. Thus, methods that generate ideas, create innovation habitats, and embrace failures as new opportunities to learn are best practices that companies must follow. Traditional long-term planning and forecasting is becoming a far harder exercise, to the point of being practically impossible. Planning therefore centers on a strategic mindset and scenario planning. Allowing local rules to emerge without direct supervision, encouraging dissent and diversity, stimulating creativity, and establishing clarity of purpose and broad guidelines are the hallmarks of success.

Principles of Leadership in a New Age

We have already explored the fact that traditional leadership models originated in the context of mass production and efficiency. These models are archaic in today's information era, where systems are characterized by exponential dynamism of variables, increased density of interactions, increased globalization and interconnectedness, massive information distribution at increasing speed, and a general shift toward economies driven by the free will of the participants rather than a central authority.

Complexity Leadership Theory (Uhl-Bien) is a “framework for leadership that enables the learning, creative and adaptive capacity of complex adaptive systems in knowledge-producing organizations or organizational units.” Since planning for the long term is virtually impossible, leadership has to be armed with different tool sets to steer the organization toward achieving its purpose. Leaders take on an enabler role rather than a controller role: empowerment supplants control. Leadership is not about the traits of a single leader: rather, the emphasis shifts from individual leaders to leadership as an organizational phenomenon. Leadership is a property of the organization rather than of an individual. We recognize that complex systems have many interacting agents, which in business parlance might constitute labor and capital. Introducing complexity leadership means empowering all of the agents with the ability to lead their sub-units toward a common shared purpose. Different agents can become leaders in different roles as their tasks or roles morph rapidly: leadership is not necessarily defined by a formal appointment or knighthood in title.

Thus, the complexity of our modern-day reality demands a new strategic toolset for the new leader. The most important skills would be complex seeing, complex thinking, complex feeling, complex knowing, complex acting, complex trusting and complex being. (Elena Osmodo, 2012)

Complex Seeing: Reality is inherently subjective. This takes a page from the Heisenberg Uncertainty principle, which is commonly read as positing that the independence between the observer and the observed is not real. If leaders are not aware of this interdependence, they run the risk of making decisions that are fraught with bias. They will continue to perceive reality through the same lens they have used in the past, despite the fact that the undercurrents and riptides of increasingly exponential systems are tearing away their “perceived reality.” Leaders have to be conscious of the tectonic shifts, reevaluate their own intentions, probe and exclude biases that could cloud the fidelity of their decisions, and engage in a continuous learning process. The ability to sift and see through this complexity sets the initial condition upon which the entire system’s efficacy and trajectory rests.

Complex Thinking: Leaders have to guard against falling prey to simple, linear cause-and-effect thinking. Instead, leaders have to engage in counter-intuitive thinking, brainstorming and creative thinking. In addition, encouraging dissent, debate and diversity fosters new strains of thought and ideas.

Complex Feeling: Leaders must maintain high levels of energy and be optimistic about the future. Failures are not scoffed at; rather, they are simply another window for learning. Leaders have to promote positive and productive emotional interactions. They are tasked with increasing positive feedback loops while reducing negative feedback mechanisms to the extent possible. Entropy and attrition tax any system as it is: the leader’s job is to set up a safe environment and inculcate respect through general guidelines and leading by example.

Complex Knowing: Leadership is tasked with formulating simple rules to enable informed and quicker decision making across the organization. Leaders must provide a common purpose, interconnect people with symbols and metaphors, and continually reiterate the raison d’etre of the organization. Knowing is articulating: leadership has to articulate what it knows and remain humble before any new and novel challenges and counterfactuals that might arise. The leader has to establish systems of knowledge: collective learning, collaborative learning and organizational learning. Collective learning is the ability of the collective to learn from experiences drawn from the vast set of individual actors operating in the system. Collaborative learning results from the interaction of agents and clusters in the organization. A learning organization, as Senge defines it, is “where people continually expand their capacity to create the results they truly desire, where new and expansive patterns of thinking are nurtured, where collective aspirations are set free, and where people are continually learning to see the whole together.”

Complex Acting: Complex action is the ability of the leader not only to work toward benefiting the agents in his/her purview, but also to ensure that the benefits resonate across a whole which, by definition, is greater than the sum of the parts. Complex acting means taking specific action-oriented steps that reflect the values that the organization represents in its environmental context.

Complex Trusting: Decentralization requires conferring power on local agents. For decentralization to work effectively, leaders have to trust that the agents will, in the aggregate, work toward advancing the organization. The cost of managing top-down far outweighs its benefits, whereas a trust-based decentralized system works well in a dynamic environment resplendent with the novelty of chaos and complexity.

Complex Being: This is the ability of the leader to favor and encourage rapid communication across the organization. The leader needs to encourage relationships and inter-functional dialogue.

The role of complex leaders is to design adaptive systems that are able to cope with challenging and novel environments by establishing a few rules and encouraging agents to self-organize autonomously at local levels to solve challenges. The leader’s main role in this exercise is to set the strategic directions and the guidelines and let the organizations run.

Chaos and the tide of Entropy!

We have discussed chaos. It is rooted in the fundamental idea that small changes in the initial condition in a system can amplify the impact on the final outcome in the system. Let us now look at another sibling in systems literature – namely, the concept of entropy. We will then attempt to bridge these two concepts since they are inherent in all systems.

Entropy arises from the laws of thermodynamics. Let us state all three laws:

- The First Law is known as the Law of Conservation of Energy, which states that energy can neither be created nor destroyed: energy can only be transformed from one form to another. Thus, if work is done through an energy transformation in a system, there is an equivalent change in energy in the surroundings of the system. This is how the books balance under the First Law of thermodynamics.

- The Second Law of thermodynamics states that the entropy of an isolated system never decreases: left to itself, it tends to increase over time. If a locker room is not tidied, entropy dictates that it will become messier and more disorderly over time. In other words, all systems that are stagnant will inevitably run up against entropy, which leads to their undoing over time. Over time the state of disorganization increases. While energy cannot be created or destroyed, as per the First Law, it certainly can change from useful energy to less useful energy.

- The Third Law establishes that the entropy of a system approaches a constant value as the temperature approaches absolute zero. Thus, the entropy of a pure, perfect crystalline substance at absolute zero temperature is zero. However, if any imperfection resides in the crystalline structure, some residual entropy will remain.

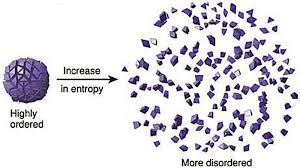

Entropy refers to a measure of disorganization. Thus a crowd that is widely spread out across a large stadium has high entropy, whereas a crowd huddled in one corner of the stadium has low entropy. Entropy is the quantitative measure of the process: namely, how much energy has gone from being localized to being diffused in a system. Entropy is enabled by the motion or interaction of elements in a system, but is actualized by the process of interaction. All particles spontaneously dissipate their energy if they are not constrained from doing so. In other words, there is an inherent will, philosophically speaking, of a system to dissipate energy, and that process of dissipation is entropy. However, the Second Law says nothing about how quickly entropy kicks into gear, and it is this fact that makes it difficult to predict the overall state of the system.
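The stadium picture can be put into numbers with a small sketch. It assumes, purely for illustration, that the stadium is divided into a handful of sections and that disorder is scored with the Shannon formula over the share of the crowd in each section. This is an information-theoretic analogy to thermodynamic entropy, not a physical calculation, and the function name and section counts are hypothetical.

```python
import math


def spatial_entropy(counts):
    """Shannon entropy (in nats) of how a crowd is spread across stadium sections.

    counts[i] is the number of people in section i. Empty sections contribute
    nothing, following the usual convention that 0 * log(0) is treated as 0.
    """
    total = sum(counts)
    shares = [c / total for c in counts if c > 0]
    return -sum(p * math.log(p) for p in shares)


if __name__ == "__main__":
    huddled = [100, 0, 0, 0]   # everyone in one corner
    spread = [25, 25, 25, 25]  # evenly spread over four sections
    print("huddled:", spatial_entropy(huddled))
    print("spread: ", spatial_entropy(spread))
```

With everyone in one section the score is zero, and it reaches its maximum, the logarithm of the number of sections, when the crowd is spread evenly.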

Chaos, as we have already discussed, makes systems unpredictable because of perturbations in the initial state. Entropy is the dissipation of energy in the system, but there is no standard way of knowing how quickly entropy will set in. There are thus two very interesting elements in systems that work almost simultaneously to make the predictability of systems harder.

Another way of looking at entropy is to view this as a tax that the system charges us when it goes to work on our behalf. If we are purposefully calibrating a system to meet a certain purpose, there is inevitably a corresponding usage of energy or dissipation of energy otherwise known as entropy that is working in parallel. A common example that we are familiar with is mass industrialization initiatives. Mass industrialization has impacts on environment, disease, resource depletion, and a general decay of life in some form. If entropy as we understand it is an irreversible phenomenon, then there is virtually nothing that can be done to eliminate it. It is a permanent tax of varying magnitude in the system.

Humans have since early times tried to formulate a working framework of the world around them. To do that, they have crafted various models and drawn upon different analogies to lend credence to one way of thinking over another. Either way, they have been left at best to wrestle with approximations: approximations of the initial conditions, approximations of model mechanics, approximations of the tax that the system inevitably charges, and the approximate distribution of potential outcomes. Despite valiant efforts to reduce the framework to physical versus behavioral phenomena, the final task of developing a predictable system has not succeeded. While the physical laws of nature describe physical phenomena, the behavioral laws describe non-deterministic phenomena. Linear equations were used as tools to understand the physical laws following the principles of classical Newtonian mechanics, but non-linear observations marred any consistent and comprehensive framework for clear understanding. Entropy reaches out toward an irreversible heat death: there is an inherent fatalism associated with the Second Law of Thermodynamics. However, if that is presumed to be the case, how is it that human evolution has jumped across multiple chasms and arrived at what it is today? If indeed entropy is the tax, one could argue that chaos, with its bounded but amplified mechanics, has allowed the human race to continue.

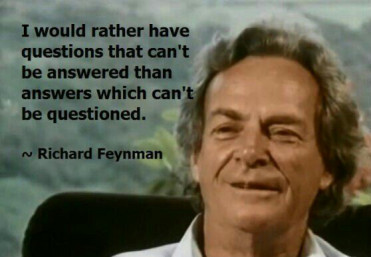

Let us now deliberate on this observation of Richard Feynman, a Nobel Laureate in physics: “So we now have to talk about what we mean by disorder and what we mean by order. … Suppose we divide the space into little volume elements. If we have black and white molecules, how many ways could we distribute them among the volume elements so that white is on one side and black is on the other? On the other hand, how many ways could we distribute them with no restriction on which goes where? Clearly, there are many more ways to arrange them in the latter case.

We measure “disorder” by the number of ways that the insides can be arranged, so that from the outside it looks the same. The logarithm of that number of ways is the entropy. The number of ways in the separated case is less, so the entropy is less, or the “disorder” is less.” This is commonly described as the distinction between microstates and macrostates. Essentially, it says that there could be innumerable microstates although, to an outsider looking in, there is only one macrostate. The greater the number of microstates, the more entropy the system has.
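The counting argument in the quotation can be sketched in a few lines. The sketch makes a simplifying assumption that is not in the quotation: each volume element holds exactly one molecule, and molecules of the same color are interchangeable. The function names and the choice of ten molecules of each color are likewise made up for illustration.

```python
import math


def ways_unrestricted(n_white, n_black):
    """Arrangements of the molecules over the cells with no restriction on color.

    Assumes one molecule per cell, so the count is the number of ways to choose
    which cells hold the white molecules.
    """
    return math.comb(n_white + n_black, n_white)


def ways_separated(n_white, n_black):
    """Arrangements with all white on the left and all black on the right.

    Under the same one-molecule-per-cell assumption there is exactly one.
    """
    return 1


def entropy(ways):
    """Entropy as the logarithm of the number of ways (natural log, no constant)."""
    return math.log(ways)


if __name__ == "__main__":
    mixed = ways_unrestricted(10, 10)
    separated = ways_separated(10, 10)
    print("unrestricted ways:", mixed, "entropy:", entropy(mixed))
    print("separated ways:   ", separated, "entropy:", entropy(separated))
```

Even with only twenty molecules, the unrestricted case has well over a hundred thousand arrangements against a single separated one, which is why the mixed state carries the higher entropy.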

In a different way, we ran across this wonderful example: A professor distributes chocolates to students in the class. He has 35 students but only 25 chocolates. He throws the chocolates to the students, and some students end up with more than others. The students do not know that the professor had only 25 chocolates: they presume that there were 35. The end result is that the students are disconcerted because they perceive that other students have received more chocolates than they have, yet the system as a whole still contains only 25 chocolates. Regardless of how the 25 chocolates are configured among the students, the macrostate is stable.

So what are Feynman and the chocolate example suggesting for our purpose of understanding the impact of entropy on systems? Our understanding is that the potential permutations of elements in the system, which reflect the various microstates, hint at higher entropy but in reality have no impact on the macrostate per se, except that the macrostate has inherently higher entropy. Does this mean that the macrostate thus has a shorter life-span? Does this mean that the macrostate is inherently more unstable? Could this mean an exponential decay factor in that state? The answer to all of the above questions is: not always. Entropy is a physical phenomenon, but to abstract it in order to study organic systems that represent super complex macrostates, and to arrive at some predictable pattern of decay, is a bridge too far! If we were to strictly follow the precepts of the Second Law and just for a moment forget about chaos, one could surmise that evolution is not a measure of progress; it is simply a reconfiguration.

Theodosius Dobzhansky, a well-known geneticist and evolutionary biologist, says: “Seen in retrospect, evolution as a whole doubtless had a general direction, from simple to complex, from dependence on to relative independence of the environment, to greater and greater autonomy of individuals, greater and greater development of sense organs and nervous systems conveying and processing information about the state of the organism’s surroundings, and finally greater and greater consciousness. You can call this direction progress or by some other name.”

Harold Morowitz says: “Life is organization. From prokaryotic cells, eukaryotic cells, tissues and organs, to plants and animals, families, communities, ecosystems, and living planets, life is organization, at every scale. The evolution of life is the increase of biological organization, if it is anything. Clearly, if life originates and makes evolutionary progress without organizing input somehow supplied, then something has organized itself. Logical entropy in a closed system has decreased. This is the violation that people are getting at, when they say that life violates the second law of thermodynamics. This violation, the decrease of logical entropy in a closed system, must happen continually in the Darwinian account of evolutionary progress.”

Entropy occurs in all systems. That is an indisputable fact. However, if we start defining boundaries, then we are prone to see these bounded systems decay faster. If instead we open up the system and leave it unbounded, then many other forces come into play that are tantamount to some net progress. While it might be true that energy balances out, what we miss as social scientists, model builders or avid students of systems are the indices that reflect leaps in quality and resilience, and a host of other factors that stabilize the system despite the constant and ominous presence of entropy’s inner workings.

Internal versus External Scale

This article discusses internal and external complexity before we tee up a more detailed discussion on internal versus external scale. It acknowledges that complex adaptive systems have inherent internal and external complexities which are not additive: their combined impact is exponential. Hence, we have to sift through our understanding and perhaps even review the salient aspects of complexity science which have already been covered in more detail earlier. Revisiting complexity science is important, and we will often return to it across other blog posts to really hit home the fundamental concepts and their practical implications for management and for solving challenges at a business or even a grander social scale.

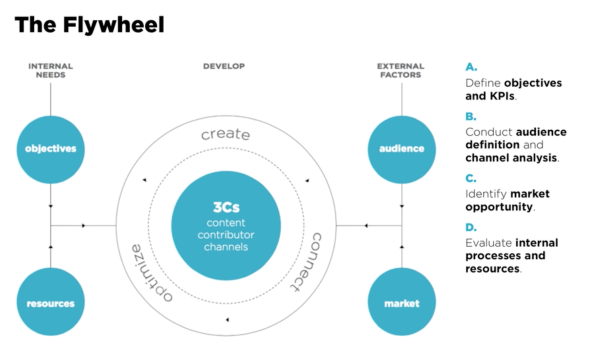

A complex system is a part of a larger environment. It is safe to say that the larger environment is more complex than the system itself. But for the complex system to work, it needs to depend upon a certain level of predictability and regularity between the impact of the initial state and the events associated with it, or in the interaction of the variables in the system itself. Note that I am covering both complex physical systems and complex adaptive systems in this discussion. A system within an environment has an important attribute: it serves as a receptor to signals from external variables of the environment that impact the system. The system will either process a signal or discard it, largely based on what the system is trying to achieve. We will dedicate an entire article to systems engineering and thinking later, but the overarching point is that a system exists to serve a definite purpose. All systems are dependent on resources and exhibit a certain capacity to process information. Hence, a system will try to extract as many regularities as possible to enable a predictable dynamic, in an efficient manner, to fulfill its higher-level purpose.

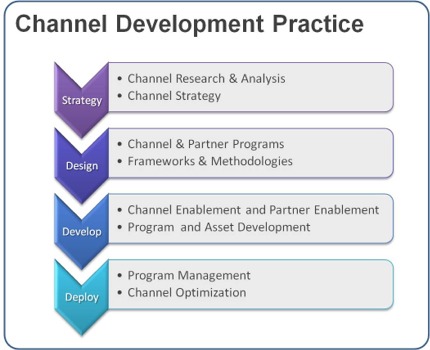

Let us understand external complexities. We can use the term environmental complexity interchangeably. External complexity represents physical, cultural, social, and technological elements that are intertwined. These environments, each beleaguered with its own grade of complexity, act as a mold that shapes operating systems that are mere artifacts. If operating systems can fit well within the mold, then there is a measure of fitness or harmony that arises between internal complexity and external complexity. This is the root of dynamic adaptation. When external environments are very complex, there are a lot of variables at play, and thus an internal system has to process more information in order to survive. So how the internal system reacts to external systems is important, and the key bridge between those two systems is learning. Does the system learn and improve outcomes on account of continuous learning, and does it continually modify its existing form and functional objectives as it learns from external complexity? How is the feedback loop monitored and managed when one deals with internal and external complexities? The environment generates random problems and challenges, and the internal system has to accept or discard these problems and then establish a process to distribute them among its agents to efficiently solve those it hopes to solve. There is always a mechanism at work which tries to align the internal complexity with the external complexity, since it is widely believed that the ability to align the systems efficiently is the key to maintaining a competitive edge or intentionally making progress in solving a set of important challenges.

Internal complexity arises from the sub-elements that interact and are constituents of a system that resides within the larger context of an external complex system or the environment. It depends on the number of variables in the system, the hierarchical complexity of the variables, the internal capabilities of information pass-through between the levels and the variables, and finally how the system learns from the external environment. There are five dimensions of complexity: interdependence, diversity of system elements, unpredictability and ambiguity, the rate of dynamic mobility and adaptability, and the capability of the agents to process information and their individual channel capacities.

If we are discussing scale management, we need to ask some fundamental questions. What is scale in the context of complex systems? Why do we manage for scale? How does management for scale advance us toward a meaningful outcome? How does scale compute in internal and external complex systems? What do we expect to see if we have managed for scale well? What does the future bode for us if we assume that we have optimized for scale and that is the key objective function that we have to pursue?