Chaos and the tide of Entropy!

We have discussed chaos. It is rooted in the fundamental idea that small changes in the initial conditions of a system can be amplified into large differences in its final outcome. Let us now look at a sibling concept in the systems literature: entropy. We will then attempt to bridge the two concepts, since both are inherent in all systems.

Entropy arises from the laws of thermodynamics. Let us state the three laws:

- The First Law is known as the Law of Conservation of Energy, which states that energy can neither be created nor destroyed: it can only be transformed from one form to another, or transferred between a system and its surroundings. Thus, whatever energy a system gains as heat or work is matched by an equal loss of energy in its surroundings, and vice versa. This bookkeeping is what balances the First Law.

- The Second Law of Thermodynamics states that the entropy of an isolated system never decreases: it increases in any spontaneous process and stays constant only in the idealized reversible case. If a locker room is not tidied, it will become messier and more disorderly over time. In other words, any system left to itself drifts toward greater disorganization, which leads to its undoing over time. While energy cannot be created or destroyed, as per the First Law, it certainly can change from useful energy to less useful energy.

- The Third Law establishes that the entropy of a system approaches a constant value as the temperature approaches absolute zero. For a perfect crystal of a pure substance, that value is zero. However, if the crystalline structure has any imperfection, some residual entropy remains even at absolute zero.
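For readers who prefer symbols, the three laws are often written compactly as below. The notation is a common textbook convention and an assumption on our part, not something fixed by the discussion above: $U$ is the internal energy of the system, $Q$ the heat added to it, $W$ the work it does on its surroundings, $S$ its entropy, and $T$ its absolute temperature.

$$
\begin{aligned}
\text{First Law:}\quad & \Delta U = Q - W \\
\text{Second Law:}\quad & \Delta S_{\text{isolated}} \ge 0 \\
\text{Third Law:}\quad & \lim_{T \to 0} S = S_0, \qquad S_0 = 0 \ \text{for a perfect crystal}
\end{aligned}
$$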

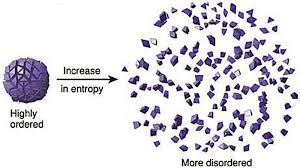

Entropy is a measure of disorganization. A crowd spread widely across a large stadium has high entropy, whereas the same crowd huddled in one corner of the stadium has low entropy. Entropy is a quantitative measure of a process: how far energy has gone from being localized to being diffused in a system. It is made possible by the motion or interaction of elements in a system, and it is realized through that interaction. All particles spontaneously dissipate their energy if they are not constrained from doing so. In other words, there is an inherent will, philosophically speaking, of a system to dissipate energy, and entropy measures that dissipation. However, the Second Law says nothing about how quickly entropy kicks into gear, and it is this fact that makes the overall state of the system difficult to predict.

Chaos, as we have already discussed, makes systems unpredictable because small perturbations in the initial state are amplified. Entropy is the dissipation of energy in the system, but there is no standard way of knowing how quickly it will set in. There are thus two very interesting elements in systems that work almost simultaneously to make those systems harder to predict.
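To make the sensitivity to initial conditions concrete, here is a minimal sketch using the logistic map, a standard textbook example of chaos. The map itself, the parameter `r = 4.0`, the starting value `0.2`, the perturbation `1e-10`, and the function names are all our choices for illustration; none of them come from the discussion above.

```python
def logistic_orbit(x0, r=4.0, steps=50):
    """Iterate x -> r * x * (1 - x) and return the whole orbit as a list."""
    orbit = [x0]
    for _ in range(steps):
        x = orbit[-1]
        orbit.append(r * x * (1.0 - x))
    return orbit


def divergence(x0, delta, **kwargs):
    """Absolute gap, step by step, between two orbits started delta apart."""
    a = logistic_orbit(x0, **kwargs)
    b = logistic_orbit(x0 + delta, **kwargs)
    return [abs(u - v) for u, v in zip(a, b)]


if __name__ == "__main__":
    # Two starting points that differ by one part in ten billion (illustrative).
    gaps = divergence(0.2, 1e-10)
    for step in range(0, 51, 10):
        print(f"step {step:2d}: gap = {gaps[step]:.3e}")
```

The gap between the two orbits starts out tiny and grows by many orders of magnitude within a few dozen steps, even though each value of the map stays between 0 and 1. That is the sense in which chaotic mechanics are bounded but amplified.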

Another way of looking at entropy is to view it as a tax that the system charges us when it goes to work on our behalf. If we are purposefully calibrating a system to meet a certain purpose, there is inevitably a corresponding dissipation of energy, otherwise known as entropy, working in parallel. A common example that we are familiar with is mass industrialization. Mass industrialization has impacts on the environment, disease, and resource depletion, and it brings a general decay of life in some form. If entropy as we understand it is an irreversible phenomenon, then there is virtually nothing that can be done to eliminate it. It is a permanent tax of varying magnitude in the system.

Humans have since early times tried to formulate a working framework of the world around them. To do that, they have crafted various models and drawn upon different analogies to lend credence to one way of thinking over another. Either way, they have been left at best to wrestle with approximations: approximations of the initial conditions, approximations of the model mechanics, approximations of the tax that the system inevitably charges, and an approximate distribution of potential outcomes. Despite valiant efforts to split the framework into physical versus behavioral phenomena, the final task of developing a predictable system has not succeeded. While the physical laws of nature describe physical phenomena, the behavioral laws describe non-deterministic phenomena. Even where linear equations were used as tools to understand the physical laws, following the principles of classical Newtonian mechanics, non-linear observations marred any consistent and comprehensive framework for clear understanding. Entropy reaches out toward an irreversible heat death: there is an inherent fatalism associated with the Second Law of Thermodynamics. However, if that is presumed to be the case, how is it that human evolution has jumped across multiple chasms and arrived at what it is today? If indeed entropy is the tax, one could argue that chaos, with its bounded but amplified mechanics, has allowed the human race to continue.

Let us now deliberate on this observation of Richard Feynman, a Nobel laureate in physics: “So we now have to talk about what we mean by disorder and what we mean by order. … Suppose we divide the space into little volume elements. If we have black and white molecules, how many ways could we distribute them among the volume elements so that white is on one side and black is on the other? On the other hand, how many ways could we distribute them with no restriction on which goes where? Clearly, there are many more ways to arrange them in the latter case.

We measure “disorder” by the number of ways that the insides can be arranged, so that from the outside it looks the same. The logarithm of that number of ways is the entropy. The number of ways in the separated case is less, so the entropy is less, or the “disorder” is less.” This is commonly described as the distinction between microstates and macrostates. Essentially, there can be innumerable microstates on the inside although, to an outsider looking in, there is only one macrostate. The more microstates that correspond to a macrostate, the higher the entropy of the system.
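Feynman's counting can be tried out on a toy box. The sketch below is only an illustration under our own simplifying assumptions: the box is split into two halves with the same number of volume elements, each element holds at most one molecule, molecules of the same color are indistinguishable, and entropy is taken as the natural logarithm of the number of arrangements with Boltzmann's constant dropped. The function names are hypothetical.

```python
from math import comb, log


def separated_ways(cells_per_side, n_per_color):
    """White molecules confined to the left half, black ones to the right half."""
    return comb(cells_per_side, n_per_color) ** 2


def unrestricted_ways(cells_per_side, n_per_color):
    """Either color may occupy any volume element in the whole box."""
    total_cells = 2 * cells_per_side
    white = comb(total_cells, n_per_color)                # place the white molecules
    black = comb(total_cells - n_per_color, n_per_color)  # then the black ones
    return white * black


def entropy(ways):
    """Logarithm of the number of arrangements (units with k = 1)."""
    return log(ways)


if __name__ == "__main__":
    # 10 volume elements per half and 5 molecules of each color (illustrative sizes).
    sep = separated_ways(10, 5)
    mix = unrestricted_ways(10, 5)
    print("separated:   ", sep, "ways, entropy", round(entropy(sep), 2))
    print("unrestricted:", mix, "ways, entropy", round(entropy(mix), 2))
```

Even at this tiny size the unrestricted case has far more arrangements than the separated one (46,558,512 against 63,504), so its entropy is higher, which is exactly the comparison Feynman draws.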

In a different vein, we ran across this wonderful example: A professor distributes chocolates to the students in his class. He has 35 students but only 25 chocolates. He throws the chocolates to the students, so some students end up with more than others. The students do not know that the professor had only 25 chocolates: they presume that there were 35. The end result is that the students are disconcerted because they perceive that other students have received more than their share, yet the system as a whole still contains only 25 chocolates. Regardless of how the 25 chocolates are configured among the students, the macrostate is stable.
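The chocolate example can also be put in numbers. The sketch below assumes, for illustration only, that the chocolates are identical, that the students are distinguishable, and that each throw lands on a uniformly random student; the example itself does not specify any of this. Under those assumptions the number of possible configurations follows from the standard stars-and-bars count, and a small simulation shows the total staying fixed while the individual shares vary. The function names are hypothetical.

```python
import random
from collections import Counter
from math import comb


def count_distributions(chocolates, students):
    """Stars and bars: ways to split identical chocolates among distinct students."""
    return comb(chocolates + students - 1, students - 1)


def toss(chocolates, students, rng):
    """Throw each chocolate to a uniformly random student; return per-student counts."""
    caught = Counter(rng.randrange(students) for _ in range(chocolates))
    return [caught[s] for s in range(students)]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed, chosen arbitrarily for repeatability
    print("possible configurations:", count_distributions(25, 35))
    for trial in range(3):
        counts = toss(25, 35, rng)
        print(f"trial {trial}: largest share = {max(counts)}, total = {sum(counts)}")
```

Every trial produces a different configuration, but the total is always 25: many microstates, one macrostate.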

So what are Feynman and the chocolate example suggesting for our purpose of understanding the impact of entropy on systems? Our understanding is that the reconfigurations, or potential permutations, of elements in the system that make up the various microstates hint at higher entropy, but in reality they have no impact on the macrostate per se, except that a macrostate with more microstates has inherently higher entropy. Does this mean that the macrostate thus has a shorter life span? Does this mean that the macrostate is inherently more unstable? Could this mean an exponential decay factor in that state? The answer to all of the above questions is: not always. Entropy is a physical phenomenon, but to abstract it out to study organic systems that represent super-complex macrostates, and to arrive at some predictable pattern of decay, is a bridge too far! If we were to strictly follow the precepts of the Second Law and forget about chaos for a moment, one could surmise that evolution is not a measure of progress; it is simply a reconfiguration.

Theodosius Dobzhansky, a well-known geneticist and evolutionary biologist, says: “Seen in retrospect, evolution as a whole doubtless had a general direction, from simple to complex, from dependence on to relative independence of the environment, to greater and greater autonomy of individuals, greater and greater development of sense organs and nervous systems conveying and processing information about the state of the organism’s surroundings, and finally greater and greater consciousness. You can call this direction progress or by some other name.”

Harold Morowitz says: “Life is organization. From prokaryotic cells, eukaryotic cells, tissues and organs, to plants and animals, families, communities, ecosystems, and living planets, life is organization, at every scale. The evolution of life is the increase of biological organization, if it is anything. Clearly, if life originates and makes evolutionary progress without organizing input somehow supplied, then something has organized itself. Logical entropy in a closed system has decreased. This is the violation that people are getting at, when they say that life violates the second law of thermodynamics. This violation, the decrease of logical entropy in a closed system, must happen continually in the Darwinian account of evolutionary progress.”

Entropy occurs in all systems. That is an indisputable fact. However, if we start defining boundaries, we are prone to see these bounded systems decay faster. If we instead open up the system and leave it unbounded, many other forces come into play that add up to some net progress. While it might be true that energy balances out, what we miss as social scientists, model builders, or avid students of systems are the indices that reflect leaps in quality and resilience, and a host of other factors that stabilize the system despite the constant and ominous presence of entropy’s inner workings.